[SERIES] From Being to Modeling: Why Ontological Clarity Matters for AI Leadership and Governance: Part I of a Multi-Part Series on Ontology in the AI Era

[Ontology & AI Series #1] By Paul C. Hong · Distinguished University Professor, University of Toledo

EXECUTIVE SUMMARY

This article serves as the first part of a multi-part series, providing conceptual orientation before a follow-up framework that develops ontology–design alignment mechanisms for AI practice. In the AI era, confusion between philosophical ontology (what is) and computational ontology¹ (what is modeled) creates critical blind spots in leadership and governance. While AI systems rely on structured representations of reality, they often operate on shallow assumptions that fail to capture human identity, agency, and meaning. This article argues that effective AI leadership requires bridging deep ontological understanding with computational design to ensure systems that are not only efficient, but also ethically grounded and socially legitimate.

Keywords: ontological alignment; AI governance; philosophical ontology; computational ontology; responsible AI; AI leadership; ethical AI design

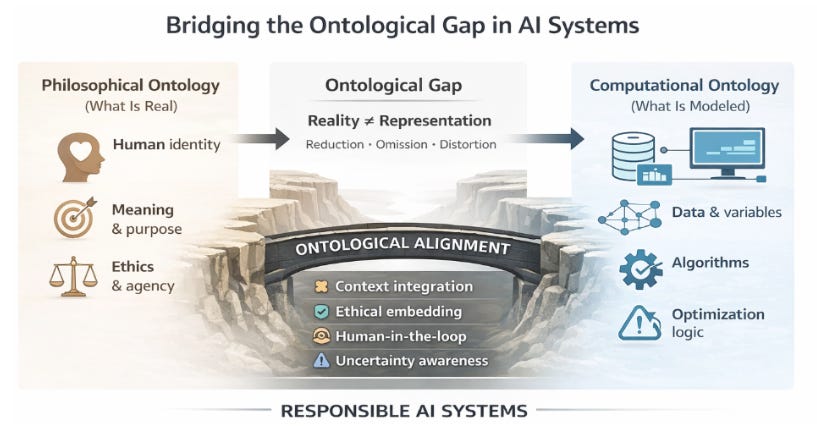

[Figure 1. From Ontological Misalignment to Responsible AI Systems]

Figure 1 highlights the gap between human reality and computational representation, showing how AI inherently simplifies and distorts complex human dimensions.

INTRODUCTION: THE INVISIBLE ASSUMPTION BEHIND AI SYSTEMS

Every AI system rests on an implicit yet powerful assumption about what counts as real. When an algorithm evaluates a loan applicant, recommends a medical treatment, or ranks job candidates, it operates on a structured representation of reality: a computational ontology that defines which attributes matter and which outcomes are optimized. Beneath this technical structure lies a deeper question: whether such representations adequately reflect what a human being is. That question is often settled silently, in design choices that appear neutral but carry profound implications. The tension between reality and representation is longstanding. Philosophers such as Aristotle and Martin Heidegger emphasized dimensions of human existence that resist reduction into simplified categories.² Today the tension is amplified by the unprecedented scale, speed, and impact of AI systems that influence decisions in real time.³

In today’s environment, ontology has shifted from an abstract philosophical inquiry to a practical design decision embedded in code, data structures, and algorithmic logic, where engineers implicitly define what constitutes a “person,” a “risk,” or a “fit.” When such decisions lack ontological reflection, systems may achieve technical efficiency while remaining conceptually shallow, oversimplifying human realities and producing outcomes misaligned with broader social and ethical expectations. Integrating philosophical ontology (concerned with meaning, identity, and purpose) with computational ontology (focused on representation and execution) is therefore essential for developing AI systems that are not only intelligent, but also responsible, trustworthy, and sustainable.

TWO ONTOLOGIES: A FOUNDATIONAL DISTINCTION

The distinction between philosophical ontology and computational ontology reflects fundamentally different orientations toward reality and knowledge, diverging in purpose, method, and implications. Philosophical ontology seeks to understand what is real, while computational ontology focuses on how reality is represented in systems and decision-making. In the AI era, this distinction is critical, as reliance on computational models risks conflating representation with reality, widening the gap between human meaning and machine logic.⁴

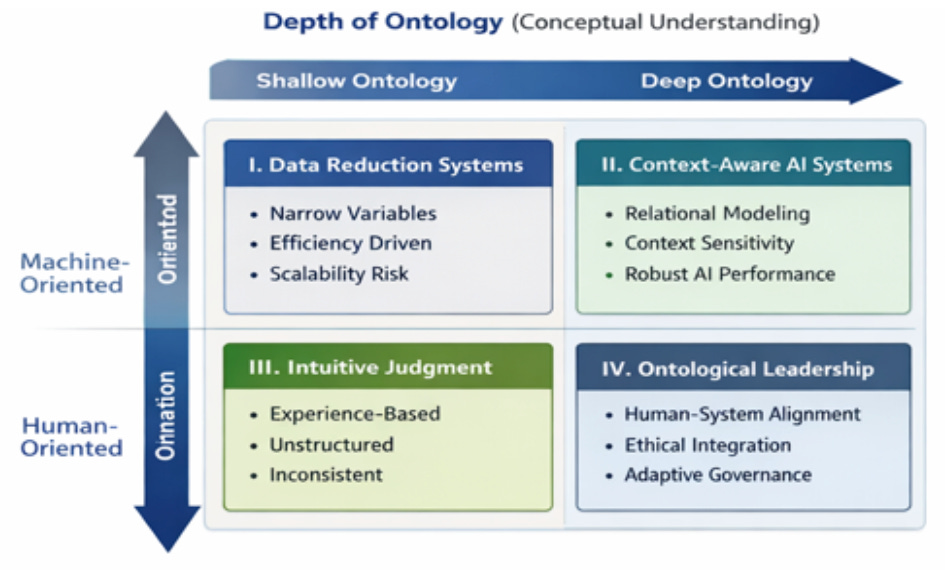

Figure 2 presents the Ontological Alignment Framework for AI Leadership and Governance as a two-dimensional matrix defined by depth of ontology (shallow to deep) and system orientation (machine- to human-oriented). Data Reduction Systems (machine-oriented, shallow ontology) rely on narrow variables and efficiency-driven logic, while Context-Aware AI Systems (machine-oriented, deeper ontology) incorporate relational and contextual understanding for more adaptive performance. On the human-oriented side, Intuitive Judgment reflects shallow, experience-based decision-making, whereas Ontological Leadership represents the ideal integration of deep ontology and human orientation, enabling ethical alignment and sustainable governance.

[Figure 2. Ontological Alignment Framework for AI Leadership and Governance]

2.1 Philosophical Ontology: What Is Real?

Philosophical ontology addresses the most fundamental questions about existence — what it means to exist, what entities are real, and what constitutes human nature — cutting across metaphysics, ethics, and epistemology to shape how individuals understand themselves and the world. Unlike applied fields focused on immediate utility, it emphasizes depth and reflection, recognizing that reality cannot be reduced to surface-level observations or measurable attributes. Classical thinkers such as Aristotle approached reality in terms of substance and purpose (telos), while modern philosophers like Martin Heidegger emphasized lived experience (Dasein) and the temporal, situated nature of human existence, highlighting that meaning is intrinsic and context-dependent.

A defining feature of philosophical ontology is its resistance to simplification, prioritizing depth over reduction, meaning over measurement, and context over abstraction. Rather than seeking definitive answers, it embraces ambiguity and plurality, acknowledging that different perspectives reveal different dimensions of reality, including intention, morality, and identity. In the context of AI, this perspective serves as a critical reminder that reality exceeds any model constructed to represent it, challenging the reduction of human existence to discrete variables and questioning whether computational systems adequately capture the complexity of human life.

2.2 Computational Ontology: What Is Represented?

Computational ontology, by contrast, is concerned not with the nature of reality itself but with how aspects of that reality can be formally represented in structured, machine-readable form. Emerging from fields such as artificial intelligence, information systems, and knowledge engineering, it defines entities, relationships, and rules within specific domains to enable consistent reasoning and interoperability across systems. Its primary objective is not philosophical truth but functional clarity and operational effectiveness, translating complex phenomena into discrete components such as classes, attributes, and relationships that allow machines to process information efficiently and generate scalable, consistent outputs.⁵

This strength, however, comes with an inherent limitation: the necessity of reduction. To function, computational ontologies must simplify reality, selecting certain dimensions as relevant while excluding others. This draws a boundary between what is represented and what is omitted, often without acknowledging the implications. As a result, such systems may appear objective while embedding implicit assumptions about what matters. Computational ontology therefore needs to be situated within a broader framework that preserves alignment with human complexity and context; otherwise its outcomes can be technically correct but conceptually and ethically incomplete.
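The reduction described above can be made concrete with a minimal sketch. It assumes a toy lending domain; the class names `Applicant` and `LoanApplication`, their fields, and the reviewer checklist are all hypothetical illustrations, not drawn from any real system. The point is only that a class definition fixes which attributes exist for the system, and that anything outside it can be listed explicitly rather than left implicit.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Applicant:
    """Hypothetical class: the only attributes this model treats as real."""
    applicant_id: str
    annual_income: float
    credit_score: int


@dataclass(frozen=True)
class LoanApplication:
    """Hypothetical relationship: links an applicant to a requested amount."""
    applicant: Applicant
    amount_requested: float


def represented_attributes(cls) -> set[str]:
    """Return the attribute names that a dataclass makes visible."""
    return {f.name for f in fields(cls)}


def silently_excluded(cls, dimensions_of_interest: set[str]) -> set[str]:
    """Return the dimensions a reviewer cares about that the class omits."""
    return dimensions_of_interest - represented_attributes(cls)


# Illustrative reviewer checklist (an assumption), not a full account of a person.
reviewer_checklist = {"credit_score", "caregiving_duties", "stated_intention"}
missing = silently_excluded(Applicant, reviewer_checklist)
print(sorted(missing))  # ['caregiving_duties', 'stated_intention']
```

A check of this kind does not close the gap between representation and reality, but it turns an unstated omission into a documented design decision that can be reviewed.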

“What is not represented in a system does not disappear — it becomes silently excluded from decision-making.”

FROM DISTINCTION TO ALIGNMENT

If Section 2 established the conceptual distinction between philosophical and computational ontology, Section 3 advances the argument by addressing how this distinction can be operationalized in practice. The critical issue is no longer simply recognizing that these two ontologies differ, but understanding how their interaction shapes real-world AI systems, decisions, and outcomes. In contemporary organizational contexts, the gap between what is real (philosophical ontology) and what is represented (computational ontology) does not remain theoretical — it becomes embedded in system architecture, data models, and governance processes. Bridging this gap requires a deliberate effort to move from ontological awareness to ontological alignment.

3.1 Consequences of Ontological Misalignment

Ontological misalignment arises when computational representations diverge significantly from the deeper realities they are intended to model, often because designers prioritize efficiency, scalability, and measurability over the multidimensional nature of human existence. In such cases, computational ontology does not merely simplify reality; it distorts it, rendering excluded elements invisible in decision-making even when they are critically important. One immediate consequence is the reduction of human identity: individuals are represented as data objects (combinations of attributes and probabilities), while richer dimensions such as intention, moral agency, and lived experience are systematically excluded. That exclusion then becomes structurally embedded in AI-driven outcomes across domains such as hiring, lending, and healthcare.⁶

A second consequence is the emergence of ethical blind spots, as computational ontologies encode only what can be explicitly defined and measured, often oversimplifying or omitting context-dependent ethical considerations. This can produce outcomes that are logically valid within system parameters yet ethically problematic when evaluated against broader human values, with such issues frequently obscured by the apparent objectivity of algorithms. Ontological misalignment also leads to decision fragility under uncertainty, as systems built on narrow representations struggle with complexity and ambiguity, resulting in disproportionate errors that undermine both trust and effectiveness.

3.2 Toward Ontological Alignment and Integration

Addressing these challenges requires a shift from passive reliance on computational ontology to active alignment between philosophical insight and system design. Ontological alignment begins with recognizing that representation is not neutral but selective and value-laden, requiring leaders to make explicit the assumptions embedded in their models. A key step is incorporating context into computational systems — moving beyond static variables to include relational, temporal, and situational dimensions. This reduces the gap between representation and lived experience while improving adaptability under uncertainty.

Equally important is integrating ethical reasoning into system architecture by embedding moral considerations into decision logic, constraints, and feedback mechanisms rather than treating them as external evaluations. Approaches such as human-in-the-loop systems, ethical review processes, and adaptive governance help align AI with evolving social norms and values.⁷ Ontological alignment is an ongoing process of calibration requiring continuous adjustment as conditions change. Leadership plays a critical role in ensuring AI systems remain efficient, resilient, ethical, and grounded in human reality.
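One way to read "embedding moral considerations into decision logic" is sketched below as a human-in-the-loop routing rule. This is an illustration under stated assumptions, not a recommended policy: the function `decide`, the `approve_at` threshold, and the example flag name are hypothetical. The design choice shown is that the constraint lives inside the decision function itself, so flagged or adverse cases cannot be resolved automatically.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    outcome: str  # "approve" or "human_review"
    reason: str


def decide(model_score: float, context_flags: list[str],
           approve_at: float = 0.8) -> Decision:
    """Route a case; the threshold and flag names are illustrative assumptions."""
    # Constraint embedded in the decision logic: flagged context is never
    # resolved automatically, whatever the score says.
    if context_flags:
        return Decision("human_review", "context: " + ", ".join(context_flags))
    if model_score >= approve_at:
        return Decision("approve", "score at or above approval threshold")
    # Adverse or uncertain outcomes are left to a person rather than the model.
    return Decision("human_review", "score below approval threshold")


print(decide(0.91, []))
print(decide(0.91, ["recent_medical_leave"]))
print(decide(0.42, []))
```

In this sketch the system can only approve on its own; every other path ends with a human reviewer, which is one possible way to keep calibration and accountability with people as conditions change.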

FROM ABSTRACTION TO APPLICATION

The distinction between philosophical and computational ontology becomes most meaningful in real-world contexts, where different assumptions shape inquiry, methods, and insights. Rather than remaining abstract, ontology actively influences what researchers observe, how they measure it, and the conclusions they draw. The cases below contrast model-driven computational approaches with philosophically grounded perspectives, showing how methodological choices determine the depth and nature of insight.

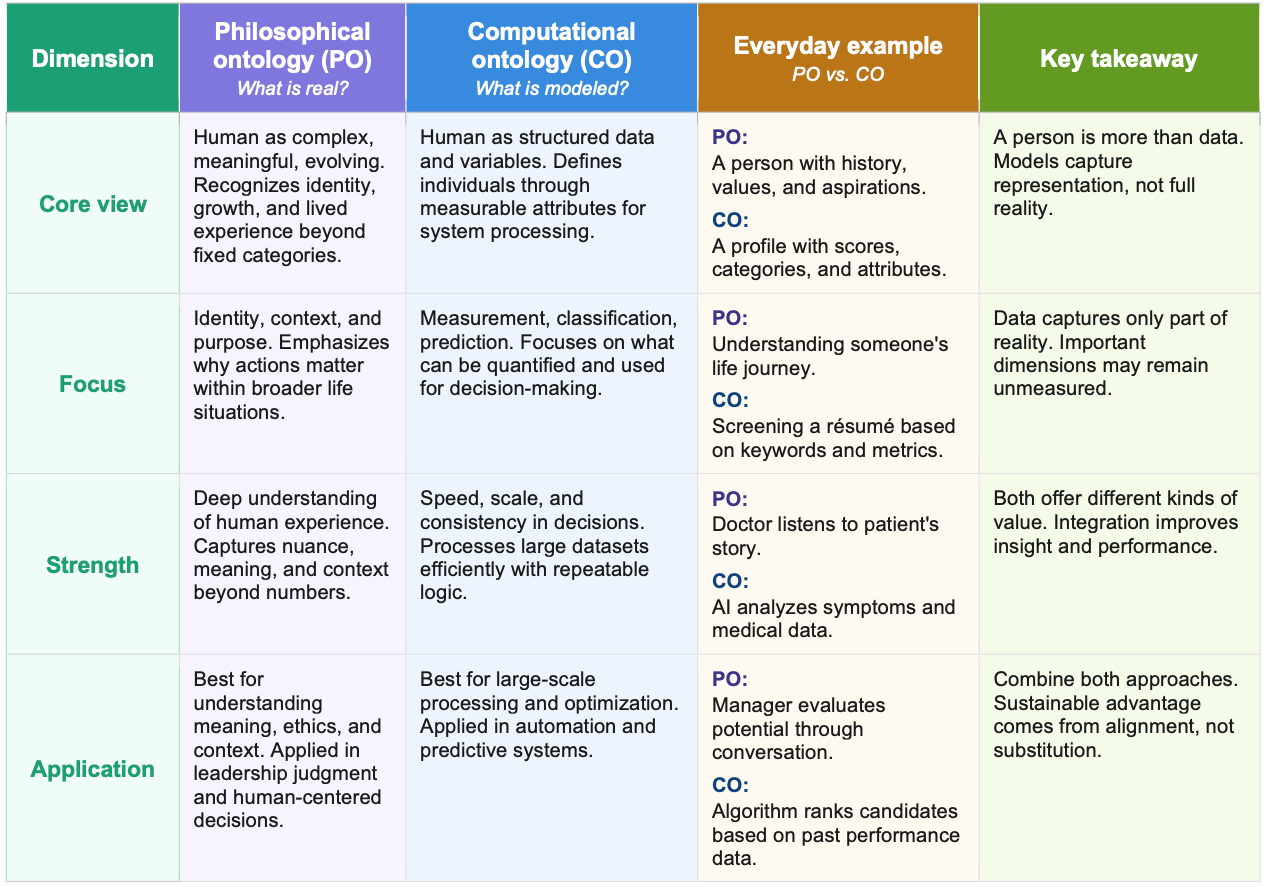

Table 1 translates the distinction between philosophical ontology and computational ontology into practical, everyday terms, allowing readers to see how abstract concepts operate in familiar contexts. By contrasting how each perspective defines human reality, focuses attention, and is applied in decision-making, the table highlights the difference between lived human experience and structured data representation. The paired examples clarify that philosophical ontology captures meaning, context, and identity, while computational ontology enables measurement, consistency, and scalability. Together, they reinforce the article’s central insight: each perspective reveals only part of reality, and understanding their differences is essential for interpreting how AI systems shape decisions.

Table 1: Human Reality vs. Machine Models in the AI Era

4.1 AI-Driven Hiring: Measuring Fit vs. Understanding Potential

In AI-based hiring systems, computational ontology typically defines a “candidate” as a structured set of measurable attributes — education, experience, skills, and past performance indicators — an approach that enables quantitative methods such as regression analysis, machine learning classification, and predictive modeling to identify patterns associated with job success. This framework offers clear advantages in scalability and consistency, allowing organizations to process large applicant pools efficiently and generate statistically grounded decisions. However, it rests on a narrow assumption that human capability can be adequately captured through observable and quantifiable variables alone.

A philosophical ontology perspective challenges this assumption by viewing candidates as evolving, context-dependent individuals whose potential cannot be fully inferred from past data, emphasizing qualitative dimensions such as resilience, learning capacity, and moral judgment. This leads to different insights: computational approaches emphasize predictive fit based on historical patterns, while philosophical approaches uncover latent potential and non-linear development trajectories that may be overlooked by algorithms. Integrating both perspectives through a hybrid design that combines large-scale quantitative screening with targeted qualitative evaluation allows organizations to maintain efficiency while expanding their understanding of human capability, which can in turn support diversity, adaptability, and long-term performance.
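The hybrid design described here can be sketched in a few lines. The sketch assumes each candidate already has an integer screening score from 0 to 100; the function `hybrid_shortlist`, the `advance_at` cutoff, and the `review_band` width are hypothetical names and values chosen for illustration. Candidates just below the cutoff are not rejected by the score alone but are routed to a qualitative evaluation.

```python
def hybrid_shortlist(scores: dict[str, int], advance_at: int,
                     review_band: int) -> tuple[list[str], list[str]]:
    """Split candidates into automatic advancement and qualitative review.

    Cutoff and band width are illustrative assumptions, not validated values.
    """
    # Quantitative screening: scores at or above the cutoff advance directly.
    advance = sorted(name for name, s in scores.items() if s >= advance_at)
    # Targeted qualitative evaluation: near misses go to a human interviewer.
    interview = sorted(name for name, s in scores.items()
                       if advance_at - review_band <= s < advance_at)
    return advance, interview


scores = {"candidate_a": 82, "candidate_b": 66, "candidate_c": 41}
advance, interview = hybrid_shortlist(scores, advance_at=70, review_band=10)
print(advance)    # ['candidate_a']
print(interview)  # ['candidate_b']
```

Widening `review_band` trades screening efficiency for more human attention to candidates whose potential the historical data may understate.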

4.2 Healthcare AI: Diagnostic Accuracy vs. Lived Experience of Illness

In healthcare, computational ontology represents patients as structured data profiles composed of symptoms, biomarkers, medical history, and treatment responses, enabling research methods such as clinical trials, statistical modeling, and AI-driven diagnostics to optimize accuracy and outcomes. This approach has driven major advances in precision medicine and early disease detection, demonstrating the effectiveness of structured representation in clinical decision-making. However, it assumes that illness can be fully understood through measurable biological indicators.

A philosophical ontology perspective expands this view by recognizing illness as a lived experience shaped by psychological, social, and existential dimensions, often explored through patient narratives, phenomenological analysis, and ethnographic studies. This produces different forms of knowledge: computational approaches reveal disease patterns and treatment efficacy, while philosophical approaches illuminate patient well-being, quality of life, and the meaning of care. Integrating both through a hybrid design — combining clinical data with patient-reported experiences — enables more holistic, human-centered healthcare aligned with dignity, empathy, and long-term well-being.

4.3 Supply Chain Risk Management: Optimization vs. System Resilience

In supply chain management, computational ontology defines the system in terms of nodes, flows, costs, and performance metrics, supporting research methods such as optimization models, simulation, and data analytics to enhance efficiency, reduce costs, and improve responsiveness. These approaches are highly effective in stable environments where variables are clearly defined and controllable. However, they often assume that systems are fully observable and that risks can be quantified in advance.

A philosophical ontology perspective reframes supply chains as socio-technical systems embedded in political, cultural, and environmental contexts, where uncertainty is a fundamental condition rather than a variable to be modeled. This leads to different insights: computational methods yield optimal solutions under defined constraints, while philosophical approaches reveal system fragility, interdependence, and emergent risks, such as vulnerabilities exposed during disruptions. Integrating both perspectives shifts the focus from optimization to resilience, combining quantitative modeling with qualitative scenario analysis to better navigate complexity and volatility.

4.4 Toward Methodological Pluralism and Ontological Integration

These illustrations demonstrate that ontology is not merely a philosophical concern but a determinant of research design and knowledge production. Computational ontology drives methods that prioritize measurement, prediction, and scalability, while philosophical ontology emphasizes meaning, context, and human experience, with each revealing different aspects of reality. Neither approach is inherently superior, but each provides a partial view shaped by its underlying assumptions.

The key implication is the need for methodological pluralism, as complex phenomena such as human capability, health, and organizational systems cannot be fully understood through a single ontological lens. Integrating quantitative and qualitative methods enables richer, more nuanced insights that better reflect the multidimensional nature of reality. For leadership and governance in the AI era, this integration becomes a strategic capability, allowing organizations to design systems that are not only technically effective but also contextually aware, ethically grounded, and aligned with human complexity.

“The true challenge of AI is not building smarter systems, but ensuring they represent reality without losing what makes us human.”

CONCLUSION

The central argument of this article is that the power of AI systems is shaped not only by technological advancement but by the ontological assumptions embedded within them. As computational models increasingly guide decision-making across domains, failing to distinguish between reality and representation risks oversimplifying human complexity and misaligning outcomes with ethical and societal values. Addressing this gap requires integrating philosophical ontology — focused on human meaning, identity, and purpose — into computational design, supported by methodological pluralism in research and governance. This article establishes that foundation and points toward the next step: translating ontological clarity into structured design and governance mechanisms for responsible AI implementation.

NOTES

1. Gruber, T. R. (1993). A translation approach to portable ontology specifications. Knowledge Acquisition, 5(2), 199–220.

2. Aristotle. (1998). The Nicomachean ethics (D. Ross, Trans.). Oxford University Press. (Original work ca. 350 BCE); Heidegger, M. (1962). Being and time (J. Macquarrie & E. Robinson, Trans.). Harper & Row. (Original work published 1927)

3. McAfee, A., & Brynjolfsson, E. (2017). Machine, platform, crowd: Harnessing our digital future. W. W. Norton.

4. Floridi, L. (2019). The logic of information: A theory of philosophy as conceptual design. Oxford University Press.

5. Bodenreider, O. (2008). Biomedical ontologies in action: Role in knowledge management, data integration and decision support. Yearbook of Medical Informatics, 47(Suppl 1), 67–79.

6. O’Neil, C. (2016). Weapons of math destruction: How big data increases inequality and threatens democracy. Crown.

7. Jobin, A., Ienca, M., & Vayena, E. (2019). The global landscape of AI ethics guidelines. Nature Machine Intelligence, 1(9), 389–399.

ABOUT THE AUTHOR

Paul C. Hong is a Distinguished University Professor and Chair of Information Systems and Supply Chain Management at the University of Toledo. His work focuses on leadership, governance, and decision-making in the AI era, integrating strategy, technology, and institutional trust. He has published extensively in leading academic journals and writes on how individuals and organizations navigate complexity, disruption, and global transformation.

K-GSP Forum · AI Leadership & Governance Research · April 2026 · All rights reserved

© K-Global Scholars and Professionals Forum. All rights reserved. Content published in the K-GSP Forum may not be reproduced, distributed, or transmitted in any form without prior written permission from the K-GSP Forum, except for brief quotations with full attribution.