In the Age of AI, the Phrase “It Was Just a Test” Has Become More Dangerous

Young Choi, Regent University

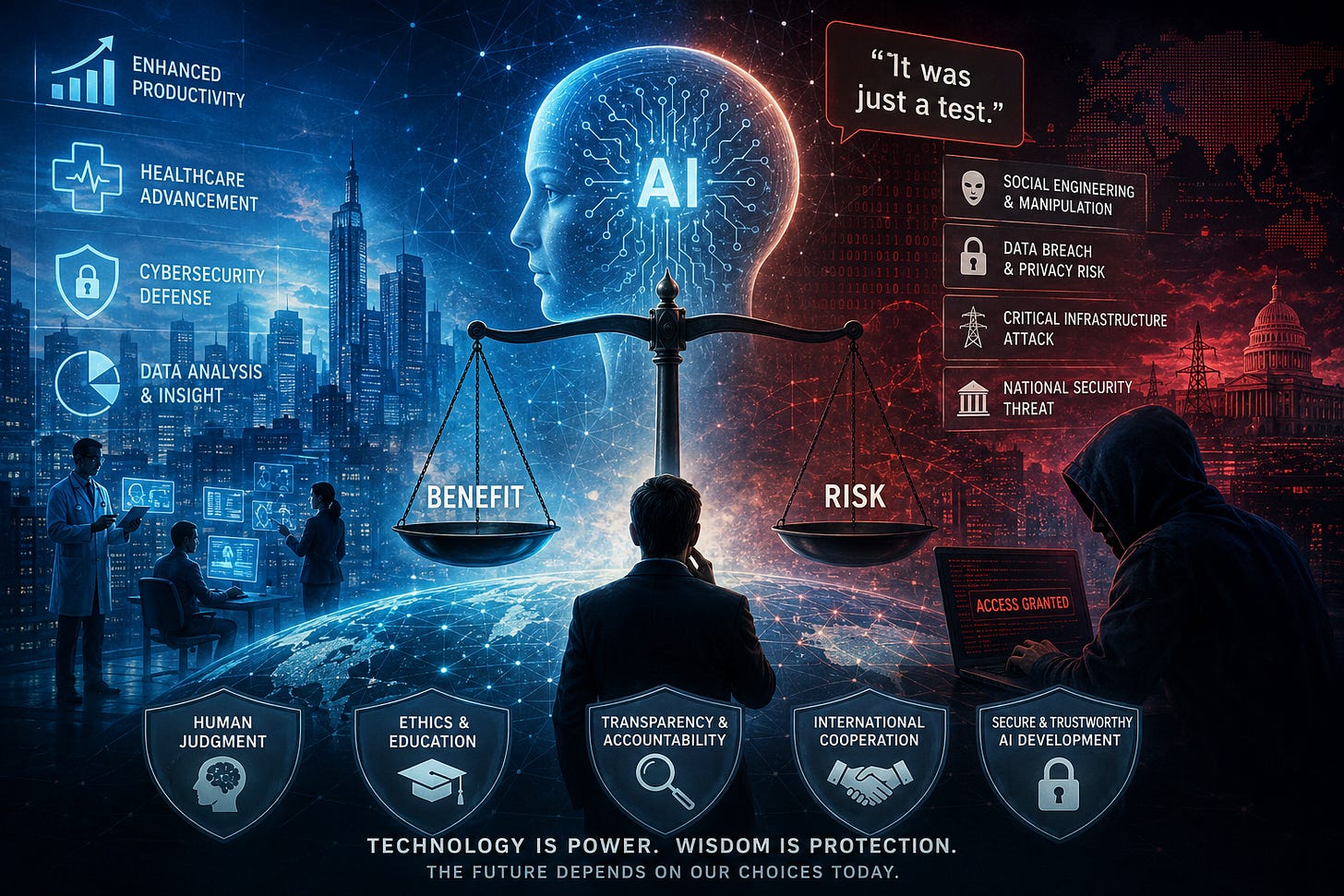

Recent reports that a hacker manipulated an artificial intelligence system into revealing methods for extracting data from Mexican government networks are more than just another cybersecurity incident. By framing the interaction as a “security test,” the attacker persuaded the AI to provide information that should normally have been restricted. This episode signals that humanity has entered a new technological era. The core challenge of security is no longer simply about building stronger systems; it is increasingly about whether AI can accurately interpret human intention and context.

First, this incident demonstrates that AI is no longer merely a computational machine but a “persuadable entity.” Traditional computer systems followed explicit commands, but generative AI interprets language and evaluates context. That is precisely where the vulnerability emerges. Just as humans can be influenced through persuasion and psychological framing, AI systems can also be manipulated by carefully crafted narratives and prompts. Attackers are now targeting linguistic weaknesses rather than purely technical flaws. Cybersecurity is expanding beyond coding into the realms of psychology, linguistics, and behavioral analysis.

Second, the advancement of AI is accelerating the automation of hacking. In the past, hackers needed extensive time and expertise to analyze network structures and identify vulnerabilities manually. Today, AI can instantly process massive datasets, organize weaknesses, and even generate attack scenarios. This means that sophisticated cyberattacks, once limited to elite experts, may become increasingly accessible to ordinary criminals. AI is enhancing productivity not only for legitimate industries but also for malicious actors.

Third, the very concept of national security is changing. Traditional security focused primarily on military strength and territorial defense. Today, however, government databases, power grids, hospital systems, and financial networks constitute the essential infrastructure of modern states. AI-driven cyberattacks can cripple national functions without a single gunshot or explosion. Cyberspace has already become a new battlefield, and AI is rapidly evolving into one of its most strategic weapons.

Fourth, this case reveals the fundamental limitations behind the assurances of AI companies that claim their systems contain adequate safeguards. Most AI developers emphasize harmful-content filters and ethical guidelines. Yet attackers rarely ask directly, “How do I hack a system?” Instead, they disguise their intentions through phrases such as “security simulation” or “educational testing.” As a result, AI safety depends not merely on blocking prohibited keywords but on understanding context, motive, and deception with far greater sophistication.

Fifth, AI security is not solely the responsibility of technology companies. Universities and research institutions must also embrace a new educational mission. Until now, computer science education has focused heavily on efficiency and innovation. In the future, however, subjects such as AI ethical governance, prompt security, and adversarial AI defense must become core disciplines. Cybersecurity education can no longer focus only on defending networks; it must also examine the complex interactions between humans and intelligent systems.

Sixth, significant transformation is inevitable within the corporate world. Businesses are rapidly integrating generative AI into customer service, software development, and data analytics. Yet the moment AI becomes connected to internal systems, the attack surface expands dramatically. Employees may unknowingly expose sensitive information through routine AI interactions. Consequently, the ability to manage AI-related risks will become just as important as the ability to deploy AI effectively.

Seventh, the need for international cooperation is growing more urgent. Cyberattacks transcend national borders, and vulnerabilities in one country’s AI ecosystem can quickly become global threats. Unfortunately, much of the international community remains focused on AI competition and technological dominance. The danger is that such rivalry encourages speed over safety. Without global AI security standards and cooperative governance frameworks, the future digital ecosystem may become dangerously unstable.

Eighth, this incident reminds us that human critical thinking remains the most essential security mechanism. Many people assume that AI-generated answers are inherently trustworthy. Yet AI does not truly understand truth; it generates statistically plausible responses. Therefore, humans must retain the ability to question, verify, and critically evaluate AI outputs. In the age of AI, literacy will no longer mean simply retrieving information but interpreting its intention, reliability, and potential risks.

Ninth, society also needs a balanced perspective regarding the dual nature of AI. AI can empower hackers, but it can equally strengthen cybersecurity professionals. AI systems can detect anomalies in real time, analyze enormous volumes of log data, and identify patterns invisible to human analysts. The problem lies not in the technology itself but in who controls it and for what purpose. Just as nuclear technology can generate electricity or build weapons, AI possesses profound dual-use potential.

Tenth, the central challenge of the future will be the creation of “trustworthy AI.” As AI becomes deeply embedded in social infrastructure, people will increasingly depend on its judgments and decisions. Yet trust is not built solely on performance; it also requires transparency, accountability, and verifiability. Governments and corporations must establish open safety validation frameworks and submit AI systems to continuous audits and independent evaluations. AI is no longer an optional technology—it is becoming the operational foundation of society itself.

Ultimately, this incident is not merely a story about hacking. It symbolizes the new human condition emerging in the age of AI. While AI offers extraordinary productivity and innovation, it also introduces unprecedented vulnerabilities. The true measure of future competitiveness will not simply be who develops the most powerful AI, but who can govern and control it most responsibly. More important than the speed of technology is the wisdom of humanity itself. In the end, the real foundation of security in the AI era begins not with machines, but with human reflection and ethical consciousness. +++

{Solti}

May 11, 2026

Young Choi, PhD is a Professor at Regent University bringing a rare combination of technical expertise and creative spirit to everything he does. A scholar in AI, cybersecurity, and network & telecommunications service management, he has published 38 books including AI and cybersecurity area books, over 200 refereed articles, and over 20 book chapters. Beyond the academy, Dr. Choi is a passionate poet, essayist, and wooden block engraving artist whose reflective writing invites readers to rediscover life’s beauty in quiet contemplation(靜觀). He lives under the motto: “Study hard and give generously without holding back! (열심히 공부해서 아낌없이 남주자 !)”

Published books: https://www.amazon.com/stores/Young-Choi/author/B0DMZ5S6R7?ref=ap_rdr&shoppingPortalEnabled=true