[RESEARCH] Layered Control Architectures for AI Safety: A Cybersecurity-Oriented Systems Framework

Young B. Choi, Paul C. Hong, and Young Soo Park, Systems 2026, 14, 447 · Published April 20, 2026

Abstract

As AI systems grow more autonomous and increasingly embedded in critical infrastructure, a central question emerges: how do we design safety not as a feature, but as a system property? This paper proposes a layered control architecture that organizes technical safeguards, governance mechanisms, and human oversight into a defense-in-depth structure — reframing AI safety as a multidimensional cybersecurity problem spanning national governance, industry standards, and firm-level design.

Key words: AI Safety · Cybersecurity · Trustworthy AI · AI Governance · Agentic AI · Value Alignment · Defense-in-Depth · Human–AI Integration

The Article

Choi, Y. B., Hong, P. C., & Park, Y. S. (2026). Layered control architectures for AI safety: A cybersecurity-oriented systems framework. Systems, 14(4), 447. https://doi.org/10.3390/systems14040447

[Summary of the Article]

Problem & Motivation

Traditional cybersecurity models were built for deterministic code. They are ill-equipped to govern probabilistic, generative, and self-modifying AI systems. Failures in these systems extend well beyond data breaches — they include hallucinated authority, automated misuse, and the erosion of institutional trust, all emerging from socio-technical feedback loops rather than explicit code flaws.

AI safety discourse has remained fragmented. Technical researchers focus on alignment and robustness. Governance scholars address ethics and compliance. Cybersecurity studies tend to extend existing threat models without rethinking architectural design from the ground up. The result: organizations lack a coherent system-level foundation for designing AI that is both powerful and demonstrably trustworthy.

Methodology

The study employs structured qualitative comparative analysis of published writings from ten globally influential AI researchers and leaders, selected through purposeful theoretical sampling for scientific impact, leadership roles, and sustained engagement with AI safety. Primary sources include peer-reviewed publications, policy reports, keynote speeches, and institutional contributions spanning 2015 to 2025.

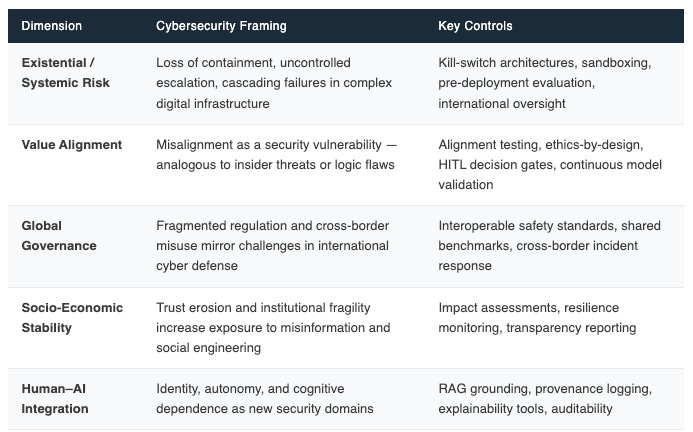

Each thinker’s perspective was coded along five predefined analytical dimensions — risk origin, preferred control mechanisms, governance scope, systemic stability, and human–AI interaction — enabling consistent cross-perspective synthesis. Validation follows design-logic criteria: internal consistency, cross-layer coherence, and grounded traceability.

Five Dimensions of AI Safety and Their Security Implications

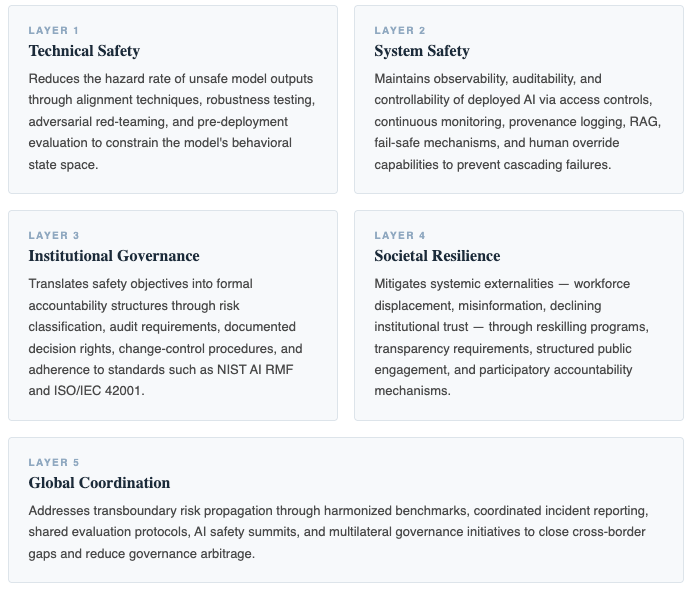

A Five-Layer Control Architecture

AI safety is conceptualized here as a system property: one that emerges from the interaction of model behavior, deployment architecture, governance arrangements, and human oversight under conditions of uncertainty and adversarial pressure. The proposed framework arranges these safeguards into a defense-in-depth architecture whose layers are distinguished by primary control authority and dominant feedback timescale.

Key Implications

For cybersecurity practice, AI safety must be treated as a first-class security domain. Organizations should embed AI safety evaluations into certification processes, expand red-teaming to socio-technical misuse scenarios, and formalize AI risk classification and escalation pathways.

For public policy, AI governance initiatives should align more closely with cybersecurity enforcement logic, linking policy objectives to concrete controls such as continuous monitoring, incident reporting, and defense-in-depth architectures rather than relying on static compliance frameworks.

For future research, priority directions include empirical validation of AI safety controls using cybersecurity-relevant metrics such as resilience, containment effectiveness, and recovery time; deeper investigation of socio-technical dynamics; and comparative studies of global regulatory regimes.

Limitations and Future Work

This framework is conceptual and theory-building; it does not constitute empirical validation. Applicability may vary across institutional environments with differing regulatory maturity, technical capacity, and geopolitical constraints. Future work should operationalize the layered architecture in high-risk AI deployments and evaluate performance using established cybersecurity metrics, while examining capability development and firm-level AI diffusion across diverse institutional contexts.


About the Authors

Prof. Dr. Young Choi — Regent University

Young B. Choi, PhD, is a Professor at Regent University who brings a rare combination of technical expertise and creative spirit to everything he does. A scholar in cybersecurity, network management, and telecommunications, he has published 157 refereed articles, 13 book chapters, and a Cambridge Scholars Publishing volume on cybersecurity. Beyond the academy, Dr. Choi is a passionate poet, essayist, and wooden block engraving artist whose reflective writing invites readers to rediscover life’s quiet beauty.

Distinguished Professor, Dr. Paul Hong — University of Toledo

Paul C. Hong is a Distinguished University Professor and Chair of Information Systems and Supply Chain Management at the University of Toledo. His work focuses on leadership, governance, and decision-making in the AI era, integrating strategy, technology, and institutional trust. He has published extensively in leading academic journals and writes on how individuals and organizations navigate complexity, disruption, and global transformation.

Prof. Dr. Young Soo Park — Midwest University

Department of Business and Leadership, Midwest University

© K-Global Scholars and Professionals Forum. All rights reserved. Content published in the K-GSP Forum may not be reproduced, distributed, or transmitted in any form without prior written permission from the K-GSP Forum, except for brief quotations with full attribution.