[SERIES] From Being to System: Rethinking Ontology in the Age of AI

Part III of a Multi-Part Series on Ontology and AI Leadership

By Paul C. Hong · Distinguished University Professor, University of Toledo

EXECUTIVE SUMMARY

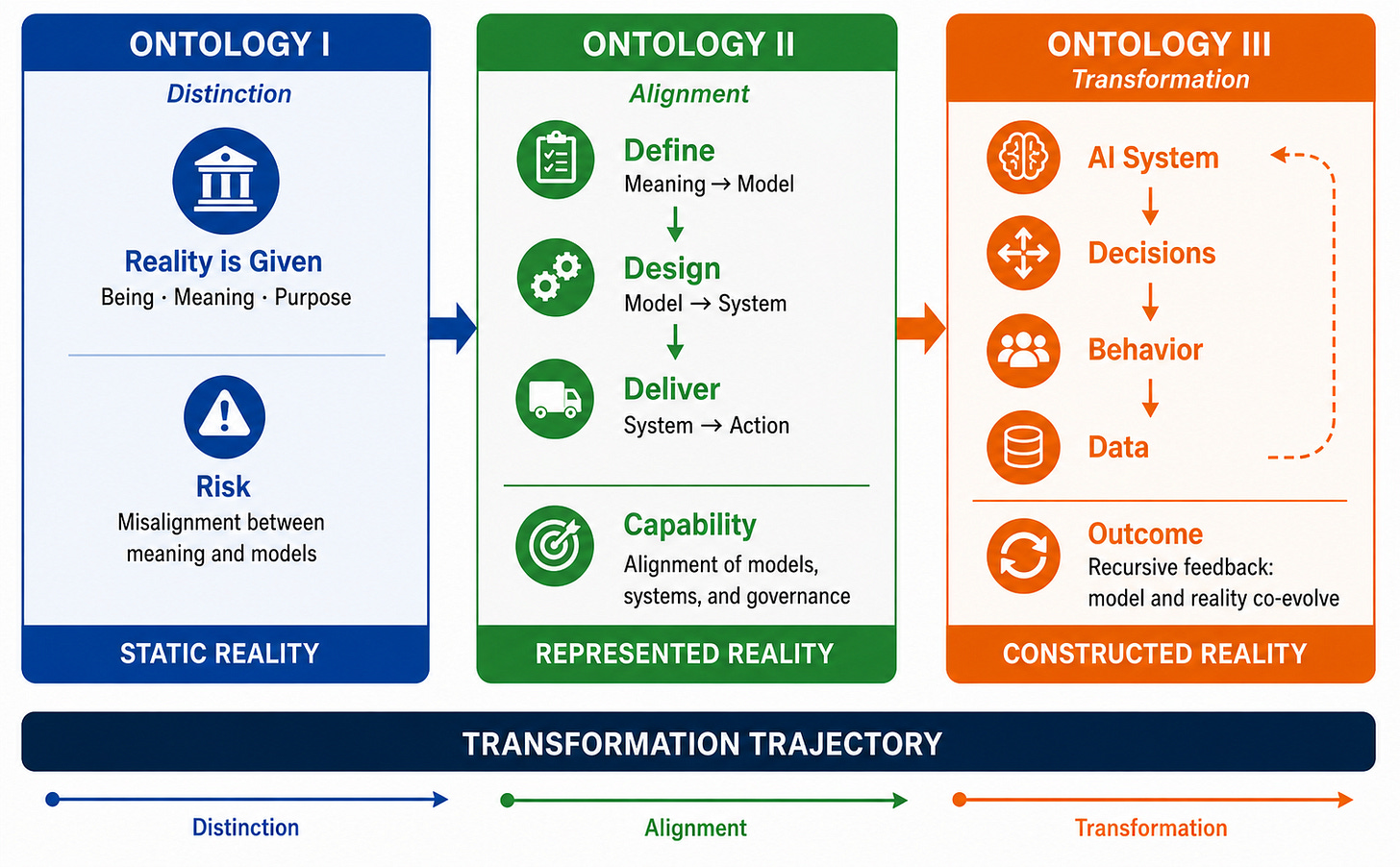

In an AI-mediated world, understanding reality requires understanding how it is constructed, not merely represented. Part III builds on earlier distinctions between philosophical and computational ontology to examine how ontology itself transforms in the age of AI. As intelligent systems increasingly mediate and act upon reality, ontology shifts from describing what is to explaining how reality is constructed and reproduced. The article argues that responsible AI leadership requires Ontology III, which integrates being, modeling, and system feedback to enable adaptive, reflexive, and human-centered systems.

Keywords: Ontology III; Socio-Technical Systems; AI Mediation; Ontological Transformation; Human-Centered AI Governance

Figure 1. Evolution of Ontology in the Age of AI: From Being to System.

Progression from foundational distinction (I) through structured alignment mechanisms (II) to recursive, AI-mediated reality construction (III).

1. INTRODUCTION

When a recent geopolitical disruption hit global energy flows, it forced shipments to reroute, stranded tankers, and drove sharp increases in energy and transportation costs. AI-driven supply chain systems responded in real time, recalculating routes, reprioritizing suppliers, and redefining what counted as “efficient” or “resilient” operations under crisis conditions. Yet as firms adapted to these system-generated decisions, supplier behavior, pricing structures, and even market expectations began to reorganize around the logic of the models themselves. In this moment, AI systems did not simply reflect disruption; they actively shaped the emerging reality of global trade, revealing that the boundary between representation and reality is increasingly blurred.

Building on prior alignment frameworks developed in Part II, this article moves beyond alignment to examine how AI systems construct reality. As intelligent systems increasingly mediate, model, and act upon reality, ontology is no longer a static question of what is, but a dynamic process of how reality is constructed, stabilized, and reproduced through system interactions. This shift reflects a deeper transformation in which representation does not merely mirror reality but actively shapes it across organizational, social, and technological domains (Floridi, 2014; Orlikowski & Scott, 2015). In this context, AI systems become not only tools of analysis but agents in the ongoing formation of what counts as reality in practice (Faraj et al., 2018).

2. FROM ALIGNMENT TO ONTOLOGICAL TRANSFORMATION

AI systems do not merely represent reality — they recursively shape, stabilize, and transform it through continuous interaction between models, decisions, and human behavior.

2.1. From Representation to Construction: The Ontological Shift

A retail platform initially recommends products based on a customer’s past purchases, appearing to reflect existing preferences. Over time, however, these recommendations begin to guide behavior: users purchase suggested items, suppliers adjust inventory, and marketing strategies align with algorithmic predictions. What began as a representation of demand becomes a mechanism that actively organizes it. This progression from Ontology I and II to Ontology III marks a shift from modeling reality to participating in its formation, where the central question is not how accurately systems reflect the world, but how they shape it (Kallinikos et al., 2013; Orlikowski & Scott, 2015).

This shift becomes evident when system outputs inform decisions and institutional practices, redefining what counts as demand, risk, or performance. As these definitions are enacted, they stabilize into taken-for-granted realities. Model assumptions and limitations do not remain abstract; they are embedded into the environments systems influence, extending their effects across organizational and market contexts. Over time, this produces self-reinforcing dynamics in which system outputs help generate the conditions that sustain them. Ontology, in this context, is no longer a static description of what exists, but an ongoing process through which reality is constructed and maintained.

2.2. Recursive Systems and Feedback Loops: The Logic of Ontology III

A navigation app directs drivers away from congested highways toward alternative routes. As more drivers follow these recommendations, new congestion emerges in previously quiet neighborhoods, prompting the system to update its guidance once again. Traffic patterns begin to reflect not only underlying conditions but also the collective response to algorithmic direction, illustrating how “traffic reality” is increasingly co-produced by the system itself. At the core of Ontology III is this logic of recursive feedback loops connecting representation, decision, and behavior, where AI systems influence human action and are in turn reshaped by the data those actions generate.

This recursive interaction reflects broader insights from research on algorithmic organizing, where learning systems continuously adapt based on the data they produce and co-evolve with the social contexts in which they operate (Faraj et al., 2018; Leonardi, 2021). Inputs are constantly reshaped by prior outputs, creating path dependency in which early design choices can scale into enduring systemic patterns. The presence of such feedback loops amplifies both strengths and weaknesses, reinforcing resilience and efficiency in well-aligned systems while magnifying bias and unintended consequences in misaligned ones. These dynamics underscore the necessity of moving beyond linear optimization toward continuous, adaptive system stewardship within Ontology III.
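The amplification and path dependency described above can be made concrete with a small simulation. The Python sketch below is illustrative only and is not drawn from any system discussed in this article. The function name `simulate_feedback`, the `exposure_bias` parameter, and the assumption that users have no intrinsic preference and simply buy what they are shown are all hypothetical simplifications. It tracks one item's share of purchases when a recommender exposes items according to their past share.

```python
def simulate_feedback(initial_share: float, exposure_bias: float, steps: int) -> list[float]:
    """Track item A's purchase share when exposure follows past share.

    exposure_bias = 1 means exposure mirrors past share exactly;
    values above 1 mean the recommender over-exposes the current leader.
    """
    share = initial_share
    history = [share]
    for _ in range(steps):
        weight_a = share ** exposure_bias
        weight_b = (1 - share) ** exposure_bias
        # Exposure is computed from the previous round's outcome (output feeds input).
        exposure_a = weight_a / (weight_a + weight_b)
        # Assumption: with no intrinsic preference, purchases follow exposure.
        share = exposure_a
        history.append(share)
    return history


if __name__ == "__main__":
    leader = simulate_feedback(initial_share=0.51, exposure_bias=2.0, steps=10)
    trailer = simulate_feedback(initial_share=0.49, exposure_bias=2.0, steps=10)
    neutral = simulate_feedback(initial_share=0.51, exposure_bias=1.0, steps=10)
    print(f"amplifying, starts ahead:  {leader[-1]:.3f}")
    print(f"amplifying, starts behind: {trailer[-1]:.3f}")
    print(f"neutral recommender:       {neutral[-1]:.3f}")
```

Under these assumptions, a one-point initial lead grows toward near-total dominance when `exposure_bias` is above 1, an equally small initial deficit collapses toward zero, and a neutral recommender leaves the share unchanged. The two items are identical in every respect except their starting position, which is the sense in which an early design choice can scale into an enduring pattern.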

2.3. Continuous Ontological Calibration: Leadership in Dynamic Systems

During a healthcare crisis, a hospital deploys an AI system to prioritize patient care based on clinical urgency. Initially, the system improves efficiency, but clinicians soon observe that vulnerable populations are consistently deprioritized due to incomplete or biased data. The hospital responds by revising the model, incorporating new variables, and reintroducing human judgment into decision processes, recognizing that system outputs must be continuously adjusted as conditions evolve. This scenario illustrates why Ontology III requires continuous ontological calibration rather than one-time alignment, as feedback loops reshape both data and outcomes in dynamic environments.

Continuous ontological calibration integrates technical monitoring with ethical reflection, requiring leaders to identify discrepancies between representation and lived reality and adjust systems accordingly. Beyond technical performance, leaders must evaluate whether outcomes align with broader goals such as fairness, trust, and human well-being, linking system performance with moral accountability. As system outputs increasingly shape institutional practices and human behavior, calibration becomes an ongoing responsibility rather than a fixed objective. This reinforces the need for continuous interpretation and adjustment, consistent with the view that digital systems produce “informational environments” that redefine how reality is experienced and governed (Floridi, 2014).

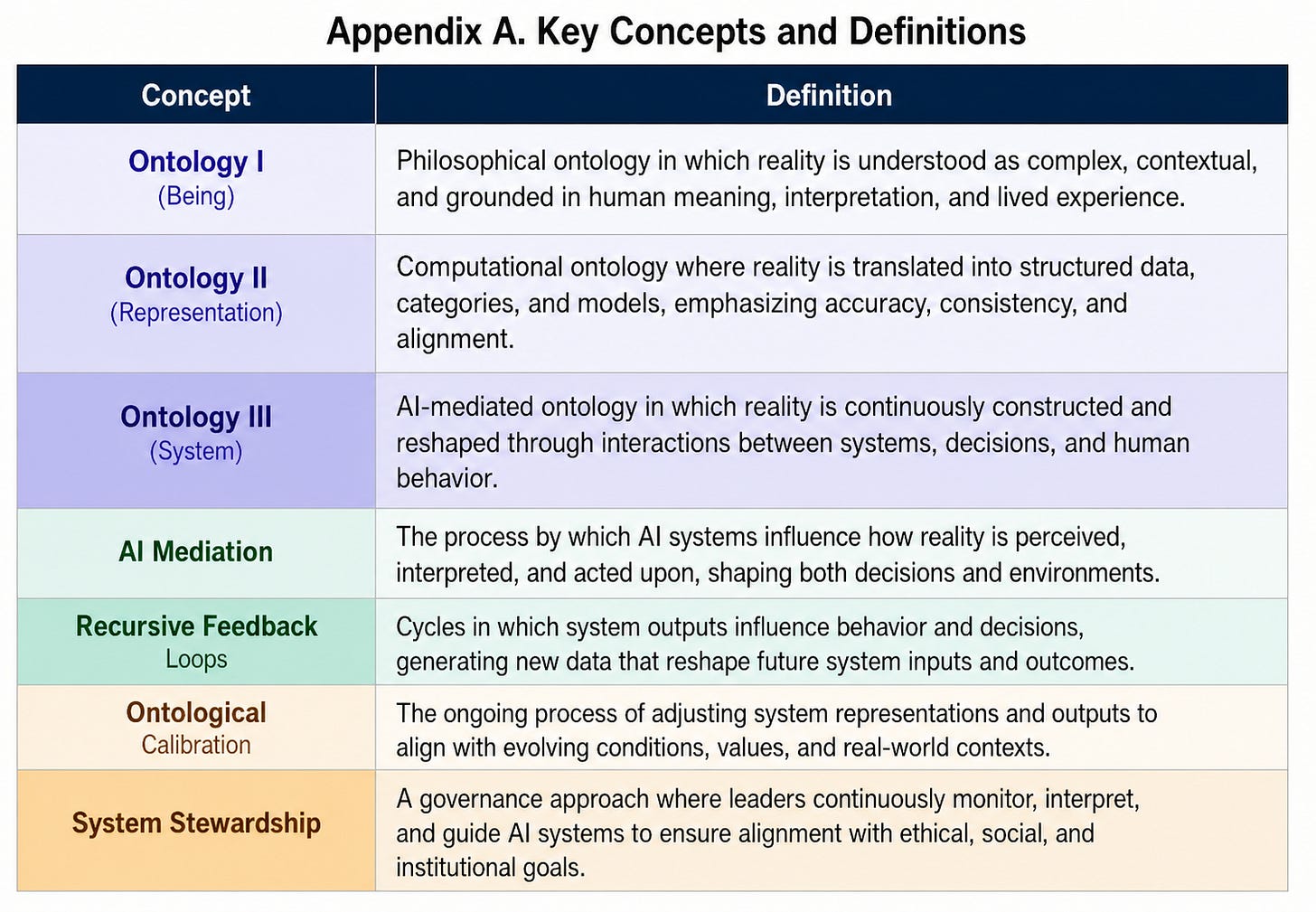

As highlighted in Table 1, the shift from representation to construction requires governance models that move beyond static oversight toward ongoing engagement with system effects.

Table 1. Evolution of Ontology: From Being to System

3. FROM ONTOLOGICAL EVOLUTION TO SYSTEM DESIGN AND GOVERNANCE

The transition from Ontology I to II and III reframes AI not as a tool for representing reality, but as a system that actively constructs and reshapes it, requiring new approaches to design and governance.

3.1. From Representation to Construction: Implications for System Design

A recommendation system initially predicts user preferences based on historical data, reflecting the Ontology II assumption that reality can be accurately represented through structured models. Over time, however, those recommendations begin to influence user choices, supplier behavior, and market dynamics, shifting the system toward Ontology III, where outputs actively shape the conditions they model. Under an Ontology II lens, this influence appears as model bias; under Ontology III, it is a self-reinforcing system dynamic. This progression illustrates the movement captured in Table 1, where the focus transitions from representing reality to participating in its construction. System design must therefore account not only for accuracy, but also for how outputs influence future inputs and reshape the environment.

Design implications follow directly from this ontological shift. Under Ontology II, systems are optimized for consistency, stability, and alignment with predefined categories, assuming relatively fixed relationships between variables. In contrast, Ontology III requires adaptive architectures that recognize recursive feedback loops and evolving contexts. Systems must incorporate mechanisms for monitoring downstream effects, detecting divergence between intended and actual outcomes, and recalibrating models accordingly. Designing AI thus becomes an ongoing engagement with dynamic reality, where systems are not passive mirrors but active participants in shaping behavioral and institutional patterns.
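One way to picture the monitoring mechanism described above is a rolling comparison of what a model predicted against what was later observed. The sketch below is a minimal Python illustration under stated assumptions: the class `DivergenceMonitor`, its `tolerance` and `window` parameters, and the choice of a simple mean gap as the divergence measure are hypothetical and are not specified in this article.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class DivergenceMonitor:
    """Flag when observed outcomes drift away from model predictions."""

    tolerance: float = 0.05  # hypothetical threshold for an acceptable mean gap
    window: int = 100        # number of recent observations to keep
    _gaps: deque = field(default_factory=deque, init=False, repr=False)

    def record(self, predicted: float, observed: float) -> None:
        """Store the gap between an intended (predicted) and an actual outcome."""
        self._gaps.append(observed - predicted)
        if len(self._gaps) > self.window:
            self._gaps.popleft()  # drop the oldest gap to keep a rolling window

    def mean_gap(self) -> float:
        """Average gap over the current window (0.0 if nothing recorded)."""
        return sum(self._gaps) / len(self._gaps) if self._gaps else 0.0

    def needs_recalibration(self) -> bool:
        """True when the average gap exceeds the tolerance in either direction."""
        return abs(self.mean_gap()) > self.tolerance


if __name__ == "__main__":
    monitor = DivergenceMonitor(tolerance=0.05, window=3)
    for _ in range(3):
        monitor.record(predicted=0.5, observed=0.5)
    print(monitor.needs_recalibration())  # predictions and outcomes agree
    for _ in range(3):
        monitor.record(predicted=0.5, observed=0.8)
    print(monitor.needs_recalibration())  # outcomes have drifted from predictions
```

A numeric flag of this kind covers only the technical half of the task. Deciding what counts as an acceptable gap, and what to change when the flag is raised, remains a matter of human judgment.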

3.2. From Control to Stewardship: Implications for Governance and Leadership

An AI-driven hiring system improves efficiency by identifying candidates based on historical success patterns, consistent with Ontology II’s emphasis on structured representation and decision optimization. Over time, however, the system reinforces existing biases as its outputs influence hiring decisions and generate new data that validate prior assumptions. This recursive dynamic reflects Ontology III, where systems co-produce organizational outcomes through continuous interaction.

Governance in Ontology III therefore becomes a form of continuous stewardship. Rather than relying on periodic audits or compliance checks, leaders must monitor how system outputs reshape behavior, institutional practices, and decision environments over time. This requires distributed accountability across designers, managers, and stakeholders, along with mechanisms for transparency, explainability, and participatory oversight. Leadership is central to this process, ensuring that systems remain aligned with human values, trust, and purpose as they evolve. In this way, governance transforms from rule enforcement into adaptive responsibility, linking system performance with ethical and societal outcomes.

4. CASE ILLUSTRATIONS: ONTOLOGY III IN PRACTICE

The abstract shift from representation to construction becomes most visible when observing how AI systems operate in real-world settings. Across industries, systems that begin as tools for prediction increasingly shape behavior, decisions, and institutional outcomes. These case illustrations demonstrate how Ontology III unfolds in practice, showing that AI systems do not merely reflect reality — they actively participate in creating it. Understanding this shift is essential for designing systems and governance approaches that remain adaptive, responsible, and grounded in human meaning.

4.1. Designing for Constructed Reality: A Case Illustration

A global e-commerce platform initially uses AI to recommend products based on customers’ past purchases and browsing behavior, appearing to reflect existing preferences. Over time, however, these recommendations begin to shape purchasing decisions, as users increasingly select suggested items rather than independently exploring alternatives. Suppliers adjust inventory toward trending products, and marketing strategies align with algorithmic insights, transforming the system from a predictive tool into an active driver of demand. This shift from representation to construction reflects broader dynamics in digital systems, where outputs influence behavior and institutional practices, reinforcing the view that reality is co-produced through socio-technical interaction (Kallinikos et al., 2013; Orlikowski & Scott, 2015). A similar pattern emerges in navigation systems, where algorithmic rerouting initially reduces congestion but ultimately reshapes traffic patterns as collective behavior responds to system guidance.

These examples underscore a central implication of Ontology III: AI systems must be designed with awareness that their outputs actively shape the realities they model. Traditional system design emphasizes accuracy and alignment between model and data, assuming relatively stable environments. In contrast, recursive systems require designers to anticipate how predictions alter future behavior and data patterns, introducing feedback sensitivity into system architecture. This aligns with research on algorithmic organizing, where systems continuously adapt based on the data they produce and co-evolve with their environments (Faraj et al., 2018; Leonardi, 2021). Designing AI therefore becomes an ongoing process of engagement with dynamic reality, requiring continuous monitoring, recalibration, and reflexivity rather than static optimization.

4.2. Governing Recursive Systems: A Case Illustration

A financial institution deploys an AI-based credit scoring system to streamline loan approvals and improve fairness, yet over time certain groups remain systematically disadvantaged — not due to explicit bias in the algorithm, but because historical inequalities embedded in the data are reinforced through repeated use. As lending decisions generate new data, these patterns persist, creating a self-reinforcing cycle. Similar dynamics arise in workforce management systems, where employees adapt their behavior to align with measurable performance indicators, often at the expense of less visible contributions such as collaboration or creativity. These cases illustrate how AI systems reshape not only decisions but also the underlying definitions of value, reinforcing the view that outcomes are co-produced through recursive interaction between systems and social contexts (Faraj et al., 2018; Orlikowski & Scott, 2015).

These dynamics expose the limitations of traditional governance approaches based on control, compliance, and periodic auditing. In recursive systems, where outputs continuously reshape inputs, governance becomes a moving target that cannot be addressed through one-time interventions. Instead, governance must shift toward continuous stewardship, requiring leaders to monitor how system outputs influence behavior, institutional practices, and social outcomes over time. This perspective aligns with the concept of informational environments, where digital systems reshape how reality is experienced and governed (Floridi, 2014). Effective governance therefore depends on distributed accountability, transparency, and human-centered leadership that ensures evolving systems remain aligned with broader values such as fairness, trust, and societal well-being.
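The self-reinforcing cycle in the credit scoring case, and the difference that ongoing intervention can make, can be sketched in a few lines of Python. This is a deliberately simplified illustration rather than a model of any real lending system. The function `simulate_lending`, the group labels, the numeric values, and the `review_denied` flag (standing in for human review that lets some denied applicants generate outcome data) are all hypothetical assumptions.

```python
def simulate_lending(
    estimates: dict[str, float],
    true_rate: float,
    threshold: float,
    rounds: int,
    learning_rate: float = 0.5,
    review_denied: bool = False,
) -> dict[str, float]:
    """Update each group's estimated repayment rate over repeated lending rounds.

    Assumption: every group has the same true repayment rate, but the
    system only learns about groups that actually receive loans.
    """
    current = dict(estimates)
    for _ in range(rounds):
        for group, score in current.items():
            approved = score >= threshold
            # Denied groups produce no repayment data unless a reviewer intervenes.
            if approved or review_denied:
                current[group] = score + learning_rate * (true_rate - score)
    return current


if __name__ == "__main__":
    # Hypothetical starting point: group B inherits a low estimate from historical data.
    start = {"A": 0.9, "B": 0.6}
    no_review = simulate_lending(start, true_rate=0.9, threshold=0.7, rounds=10)
    with_review = simulate_lending(
        start, true_rate=0.9, threshold=0.7, rounds=10, review_denied=True
    )
    print("without review:", no_review)
    print("with review:   ", with_review)
```

Without review, group B's estimate never moves, because the decisions that would correct it are never made. With review, the estimate converges toward the shared true rate. In this toy setting, a one-time audit of the scoring rule would find no explicit bias, which is why the passage above treats governance as continuous rather than periodic.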

4.3. Key Takeaways

Together, these insights show that in the age of AI, the central challenge is not representing reality accurately, but understanding how systems participate in constructing and reshaping it over time.

AI shifts ontology from representation to construction — Reality is no longer passively mirrored by models but actively co-produced through system outputs and human responses. As AI-generated decisions influence behavior and data, the boundary between model and reality dissolves into continuous interaction.

Recursive feedback loops redefine system dynamics — Outputs feed back into inputs, creating evolving patterns that shape future decisions and conditions. These loops introduce path dependency and amplification, where small assumptions can scale into systemic outcomes over time.

Leadership must move from control to ontological stewardship — Managing AI systems requires ongoing interpretation of how they shape meaning, behavior, and institutional reality. Continuous calibration ensures that systems remain aligned with human values, trust, and purpose in dynamic environments.

5. CONCLUSION

This study advances the argument that in the age of AI, ontology is no longer limited to representing reality but increasingly involves its construction through recursive interaction between systems, decisions, and human behavior. By introducing Ontology III, the article highlights how intelligent systems participate in shaping organizational practices, institutional structures, and the meanings that guide human action. As a result, the central challenge shifts from achieving static alignment to sustaining dynamic coherence in continuously evolving environments.

The findings underscore that effective AI design and governance require adaptive architectures, continuous ontological calibration, and stewardship-based leadership. Systems must be evaluated not only for accuracy and efficiency, but for their capacity to support trust, fairness, and human purpose as they reshape the contexts in which they operate. Leadership plays a critical role in interpreting system impacts and ensuring that technological capabilities remain aligned with broader societal values.

As AI systems become increasingly embedded in everyday life, their influence extends beyond decision-making into the ongoing formation of reality itself. Recognizing this ontological shift is essential for navigating complexity, uncertainty, and unintended consequences in socio-technical systems. AI does not simply model reality; it participates in constructing it, calling for a new form of leadership grounded in responsibility, reflexivity, and human meaning (Bostrom, 2014; Floridi, 2014). Future research should examine how Ontology III can be operationalized through measurable system-level indicators of reflexivity and alignment.

REFERENCES

Bostrom, N. (2014). Superintelligence: Paths, dangers, strategies. Oxford University Press.

Faraj, S., Pachidi, S., & Sayegh, K. (2018). Working and organizing in the age of the learning algorithm. Information and Organization, 28(1), 62–70. https://doi.org/10.1016/j.infoandorg.2018.02.005

Floridi, L. (2014). The fourth revolution: How the infosphere is reshaping human reality. Oxford University Press.

Kallinikos, J., Aaltonen, A., & Marton, A. (2013). The ambivalent ontology of digital artifacts. MIS Quarterly, 37(2), 357–370. https://doi.org/10.25300/MISQ/2013/37.2.02

Leonardi, P. M. (2021). COVID-19 and the new technologies of organizing: Digital exhaust, digital footprints, and artificial intelligence in the wake of remote work. Journal of Management Studies, 58(1), 249–253. https://doi.org/10.1111/joms.12648

Orlikowski, W. J., & Scott, S. V. (2015). The algorithm and the crowd: Considering the materiality of service innovation. MIS Quarterly, 39(1), 201–216. https://doi.org/10.25300/MISQ/2015/39.1.09

ABOUT THE AUTHOR

Paul C. Hong · Distinguished University Professor · University of Toledo

Paul C. Hong is a Distinguished University Professor and Chair of Information Systems and Supply Chain Management at the University of Toledo. His work focuses on leadership, governance, and decision-making in the AI era, integrating strategy, technology, and institutional trust. He has published extensively in leading academic journals and writes on how individuals and organizations navigate complexity, disruption, and global transformation.

© K-Global Scholars and Professionals Forum. All rights reserved. Content published in the K-GSP Forum may not be reproduced, distributed, or transmitted in any form without prior written permission from the K-GSP Forum, except for brief quotations with full attribution.