[SERIES] Ontology–Design Alignment in AI: From Philosophical Insight to Scalable Practice

[Ontology & AI Series #2] By Paul C. Hong · Distinguished University Professor, University of Toledo

EXECUTIVE SUMMARY

This article is the second installment in a multi-part series examining ontology and AI leadership, advancing the concept of ontology–design alignment mechanisms for practical AI implementation. Building on the conceptual foundation established in Part I, it translates the distinction between philosophical and computational ontology into structured frameworks that guide system design, governance, and leadership decision-making. The central argument is that aligning what is modeled with what is meaningful enables AI systems that are not only efficient and scalable, but also context-aware, ethically grounded, and resilient in complex environments.

Keywords: Ontological alignment; AI governance; computational ontology; philosophical ontology; AI system design

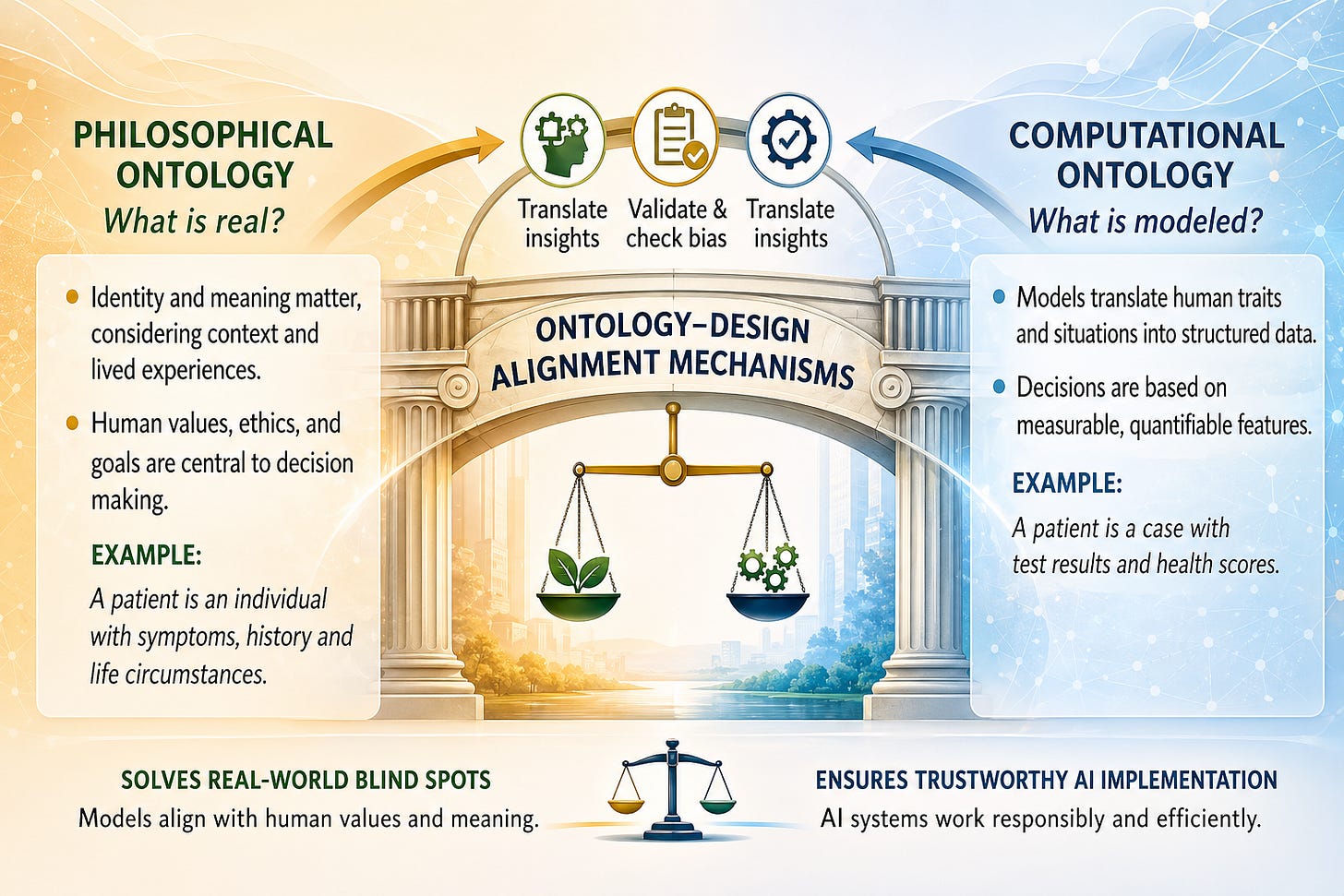

Figure 1. Ontology–Design Alignment Mechanisms

Building on the conceptual foundation established in Part I, this article moves from explanation to application. While the earlier discussion clarified the distinction between what is real and what is represented, the present framework focuses on how that distinction can be operationalized in system design, leadership, and governance.

INTRODUCTION

Imagine a hiring manager evaluating two candidates. An AI system ranks Candidate A higher based on structured data — education, years of experience, and skill keywords — while the manager recognizes that Candidate B demonstrates stronger adaptability, resilience, and cultural fit. This tension reveals a fundamental issue: the gap between what exists and matters in reality and what AI systems are able to represent and compute. This is precisely where the distinction between philosophical ontology and computational ontology becomes operationally significant.

Understanding this distinction is not merely theoretical; it offers immediate practical value for leaders, designers, and decision-makers working with AI systems. Philosophical ontology clarifies what matters (human identity, context, purpose, and meaning), while computational ontology determines how these dimensions are translated into data structures, variables, and decision rules. When the two are integrated effectively, organizations can better diagnose system limitations, uncover hidden assumptions, and avoid reducing complex realities to overly simplified representations. This improves decision quality in domains such as hiring, healthcare, and supply chain management, where organizations must balance efficiency with fairness, accuracy with context, and scale with human impact (Davenport & Ronanki, 2018; Brynjolfsson & McAfee, 2017).

Ontological clarity thus becomes a practical capability, enabling leaders to critically interpret AI outputs and design systems aligned with real-world conditions. However, understanding alone is insufficient without disciplined ontology–design alignment mechanisms that translate meaning into system architecture, workflows, and governance processes. Without such alignment, organizations risk deploying AI systems that are efficient yet fragile; with it, they can build systems that are resilient, trustworthy, and adaptable in complex environments.

ONTOLOGY–DESIGN ALIGNMENT: FROM CONCEPT TO MECHANISM

Consider a hospital deploying an AI system to prioritize patient care. At the conceptual level, leaders want the system to reflect not just medical urgency but also patient vulnerability, quality of life, and social context. Once implemented, however, the system relies primarily on measurable indicators: vital signs, lab results, and diagnosis codes. The gap between intention and execution illustrates the core challenge, which is not simply defining what matters but ensuring that what matters is systematically translated into how systems are built and operate. Once the distinction between philosophical and computational ontology is understood, the task becomes connecting meaning with implementation. This requires structured mechanisms that translate human meaning into design, validate representations, and govern decision-making across diverse contexts.

2.1 Philosophical Ontology as a Source of Meaning and Direction

Imagine a hiring panel debating between two candidates. One has a perfect résumé; the other shows resilience, adaptability, and strong interpersonal impact from nontraditional experiences. The discussion goes beyond data points into questions of potential, meaning, and long-term contribution — dimensions that cannot be easily quantified but are crucial to the organization’s future.

Philosophical ontology provides the foundational lens through which human reality is understood in its full complexity. It emphasizes that individuals are not merely collections of measurable attributes but are shaped by identity, purpose, relationships, and lived experience. In practical terms, this perspective helps organizations recognize what data-driven systems often exclude: context, intention, ethical considerations, and evolving human conditions, themes central to broader discussions of ethics and human-centered AI (Floridi et al., 2018; Mittelstadt et al., 2016). By foregrounding these dimensions, philosophical ontology informs what should matter in system design, guiding the selection of variables, the framing of problems, and the interpretation of outcomes beyond purely quantitative metrics. In operational settings, this leads to more thoughtful problem definition by broadening evaluation criteria, for instance by considering long-term potential in hiring, patient experience in healthcare, and resilience and stakeholder impact in supply chains. Philosophical ontology thus serves as a directional compass, keeping AI systems from optimizing narrow objectives at the expense of broader human values and institutional purpose.

2.2 Computational Ontology as a Structure for Execution and Scale

Consider the same hiring process once automated. The AI system converts candidates into structured profiles (education level, years of experience, skill tags, and assessment scores) and applies consistent rules across thousands of applicants, producing rankings efficiently and at scale. What was once a nuanced discussion becomes a structured, repeatable computation. In this context, computational ontology operationalizes reality by translating selected aspects of it into structured representations that machines can process: it defines the entities, attributes, relationships, and rules that enable consistency, scalability, and automation across large datasets. This structured approach is essential for implementing AI at scale, allowing organizations to process complex information efficiently and generate repeatable, data-driven decisions. In domains such as finance, logistics, and digital platforms, computational ontology provides the backbone for predictive analytics, optimization models, and real-time decision systems, as discussed in work on the economics of AI (Varian, 2019).
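To make the idea of a computational ontology concrete, the sketch below shows one way the automated hiring example might look in code. It is a minimal illustration under stated assumptions, not a description of any real screening product: the class `CandidateProfile`, the skill set `REQUIRED_SKILLS`, the education coding, and the `WEIGHTS` values are all hypothetical. What it shows is the structural point of the paragraph above: the schema fixes which attributes exist, and one rule is applied identically to every profile.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical job requirements and weights, chosen only for illustration.
REQUIRED_SKILLS = frozenset({"python", "sql", "forecasting"})
WEIGHTS = {
    "education_level": 2.0,
    "years_experience": 1.0,
    "skill_match": 3.0,
    "assessment_score": 0.05,
}


@dataclass(frozen=True)
class CandidateProfile:
    """The model's view of a candidate: only these attributes exist for it."""
    name: str
    education_level: int      # assumed coding: 1 = bachelor's, 2 = master's, 3 = doctorate
    years_experience: float
    skill_tags: frozenset[str]
    assessment_score: float   # assumed 0-100 scale


def score(c: CandidateProfile) -> float:
    """Apply one fixed linear rule to every profile."""
    skill_match = len(c.skill_tags & REQUIRED_SKILLS) / len(REQUIRED_SKILLS)
    return (
        WEIGHTS["education_level"] * c.education_level
        + WEIGHTS["years_experience"] * c.years_experience
        + WEIGHTS["skill_match"] * skill_match
        + WEIGHTS["assessment_score"] * c.assessment_score
    )


def rank(candidates: list[CandidateProfile]) -> list[CandidateProfile]:
    """Highest score first; identical inputs always yield identical rankings."""
    return sorted(candidates, key=score, reverse=True)
```

Note that nothing in `CandidateProfile` can register adaptability or resilience. A candidate whose strengths lie there cannot rise in the ranking, and the omission happens in the schema, before any weight is tuned.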

However, the strength of computational ontology, its precision and efficiency, also imposes constraints through necessary simplification. By reducing reality to predefined variables and categories, it may exclude critical contextual or qualitative dimensions. This raises concerns about model limitations and control, particularly when systems optimize objectives that only partially represent human intent, a core issue in the broader AI alignment problem (Russell, 2019; Bostrom, 2014). Operationally, the risk grows when systems are deployed across diverse environments whose conditions vary and cannot be fully anticipated. A highly optimized supply chain model may fail under unexpected disruptions, and a credit scoring system may overlook unique individual circumstances. Computational ontology must therefore be continuously calibrated and complemented by mechanisms that reintroduce context, validate assumptions, and adapt to changing conditions. It serves as the execution engine of AI systems, but its effectiveness depends on how closely it stays aligned with the realities it seeks to represent.

Ontology–design alignment spans five core dimensions: human identity, reality representation, decision logic, knowledge boundaries, and purpose. Across each, philosophical ontology centers meaning, context, and ethical intent, while computational ontology translates these into formal structures and measurable outputs. Misalignment along any of these dimensions can lead to oversimplification, bias, and false precision, which call for deliberate design and governance interventions of the kind emphasized in prior work on ethical and accountable AI systems (Floridi et al., 2018; Mittelstadt et al., 2016).

DEFINE–DESIGN–DELIVER (3D) TRANSLATION FRAMEWORK

Consider a global logistics company responding to unexpected supply chain disruptions. In the early stages, leaders must define what truly matters — not just cost and speed, but resilience, supplier reliability, and geopolitical risk. Next, engineers design models that translate these priorities into variables such as lead-time variability, risk scores, and alternative routing options. Finally, the system delivers decisions in real time — rerouting shipments, reallocating inventory, and balancing efficiency with stability. When these stages are aligned, the system performs effectively under pressure; when they are not, even sophisticated models can fail in unpredictable ways.

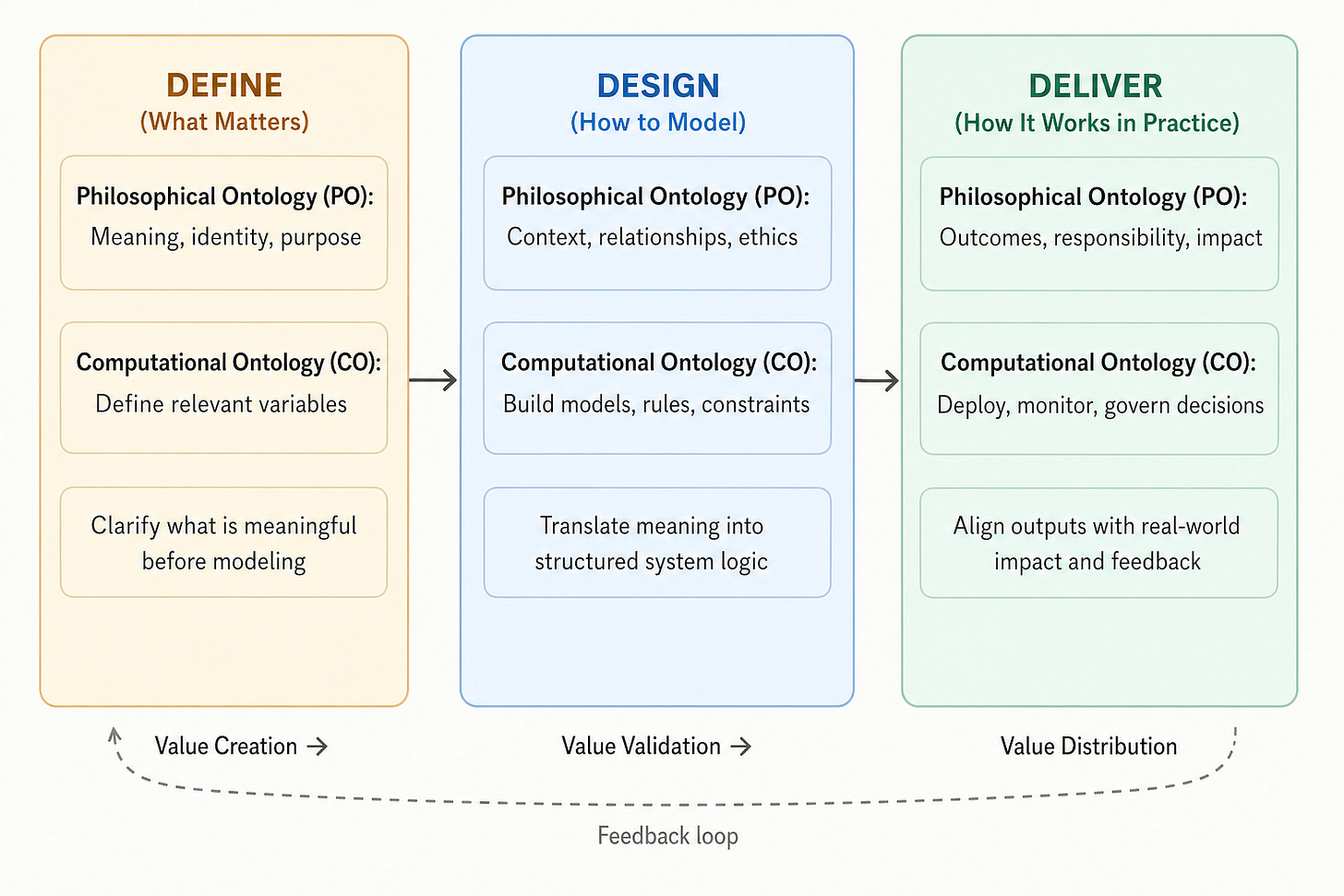

To operationalize the relationship between philosophical ontology and computational ontology, this article proposes a three-stage translation framework — Define, Design, and Deliver (3D) — that provides a systematic pathway for translating meaning into scalable AI execution. While philosophical ontology clarifies what is meaningful in human reality and computational ontology structures what is modeled in AI systems, the key challenge lies in bridging the two in a repeatable way, which the 3D framework addresses by guiding how meaning is identified, encoded, and implemented in practice, building on prior work linking AI design to organizational performance and decision-making (Brynjolfsson & McAfee, 2017; Davenport & Ronanki, 2018). Rather than treating ontology as static, the framework emphasizes continuous alignment across stages — defining what matters, designing how it is represented, and delivering outcomes that balance performance with responsibility — ensuring AI systems remain technically robust, contextually grounded, and ethically aligned.

Figure 2. Ontology–Design Alignment Framework: From Meaning to Execution

Figure 2 presents the Ontology–Design Alignment Framework as a three-stage progression (Define, Design, and Deliver) that translates philosophical ontology into actionable computational ontology. The left stage (Define) identifies what is meaningful in human reality, such as identity, purpose, and context, which then informs the selection of relevant variables. The middle stage (Design) converts these insights into structured models, rules, and system logic so that meaning is not lost in simplification. The right stage (Deliver) covers real-world execution, where systems are deployed, monitored, and governed to align outcomes with intended impact. The figure also shows a continuous feedback loop across stages and a value chain running from value creation to validation and distribution, reinforcing that effective AI systems require ongoing alignment between what is meaningful and what is modeled.

3.1 Define: Clarifying Meaning, Scope, and Relevance

The Define stage focuses on identifying what is meaningful within a given problem context. Drawing from philosophical ontology, this stage emphasizes human identity, purpose, context, and values as the foundation for system design. Rather than beginning with available data or existing models, the Define phase asks a more fundamental question: What aspects of reality should be represented, and why do they matter? This shifts the starting point of AI development from data availability to problem relevance, ensuring that critical dimensions are not excluded prematurely.

At an operational level, defining scope and meaning helps organizations avoid mis-specified problems and incomplete representations. For example, in hiring decisions, defining success solely through past performance metrics may overlook adaptability, learning potential, or cultural contribution. In healthcare, defining outcomes only in terms of clinical indicators may neglect patient well-being and lived experience. By expanding the scope of consideration, the Define stage improves the quality of subsequent modeling and reduces the risk of systemic blind spots. It establishes the conceptual boundary conditions within which computational models will operate.
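One way to make the Define stage's "boundary conditions" checkable is to record each meaningful dimension alongside the model features intended to capture it, and then audit a model schema against that record. The sketch below assumes a hiring context; the dimension names, the mapping `DEFINED_DIMENSIONS`, and the feature names `role_change_history` and `learning_assessment` are hypothetical, and the audit is a simple set-intersection check rather than an established method.

```python
from __future__ import annotations

# Hypothetical Define-stage specification for a hiring system: each dimension the
# organization judged meaningful, mapped to the model features meant to capture it.
DEFINED_DIMENSIONS: dict[str, set[str]] = {
    "technical skill": {"skill_tags", "assessment_score"},
    "experience": {"years_experience"},
    "formal education": {"education_level"},
    "adaptability": {"role_change_history"},         # hypothetical feature
    "learning potential": {"learning_assessment"},   # hypothetical feature
}


def coverage_gaps(dimensions: dict[str, set[str]], model_features: set[str]) -> list[str]:
    """Return the defined dimensions that no feature in the model schema represents."""
    return sorted(name for name, feats in dimensions.items() if not feats & model_features)
```

Run against a résumé-only schema such as `{"education_level", "years_experience", "skill_tags", "assessment_score"}`, the audit reports `adaptability` and `learning potential` as gaps, surfacing a blind spot before any model is trained rather than after deployment.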

3.2 Design: Translating Meaning into Structured Models

The Design stage translates the insights from the Define phase into computational ontology — structured representations that AI systems can process. This involves selecting variables, defining relationships, constructing models, and embedding rules that reflect the intended meaning identified earlier. At this stage, philosophical considerations must be carefully encoded into system architecture, ensuring that context, relationships, and constraints are not lost in simplification.

The challenge in the Design stage lies in balancing precision with representation. While computational systems require clarity and structure, overly narrow definitions can distort reality and lead to suboptimal or biased outcomes. For instance, in credit scoring, reducing risk assessment to a limited set of financial indicators may exclude important contextual factors such as life transitions or temporary disruptions. Effective design therefore incorporates mechanisms such as contextual variables, layered modeling, and human-in-the-loop validation to preserve alignment. This stage functions as the translation engine, converting meaning into model logic while maintaining fidelity to real-world complexity.
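The credit scoring example can illustrate how layered modeling and human-in-the-loop validation might fit together. In the sketch below, a model's risk score forms the first layer; a second rule layer consults contextual flags the model does not weigh and decides whether a case is settled automatically or sent to a person. The field names, the 0.3 and 0.7 thresholds, and the score convention are assumptions made for illustration, not parameters from any real lending system.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    APPROVE = "approve"
    DECLINE = "decline"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Applicant:
    risk_score: float                  # layer 1: model output in [0, 1], higher = riskier (assumed)
    recent_life_transition: bool       # hypothetical contextual flag, e.g. relocation
    temporary_income_disruption: bool  # hypothetical contextual flag, e.g. short-term job loss


APPROVE_BELOW = 0.3  # hypothetical thresholds
DECLINE_ABOVE = 0.7


def route(a: Applicant) -> Route:
    """Layer 2: decide whether the model's score is allowed to settle the case alone."""
    has_context = a.recent_life_transition or a.temporary_income_disruption
    if a.risk_score < APPROVE_BELOW:
        return Route.APPROVE
    if a.risk_score > DECLINE_ABOVE and not has_context:
        return Route.DECLINE
    # Borderline scores, and would-be declines that carry contextual flags, go to a person.
    return Route.HUMAN_REVIEW
```

The design choice is deliberately asymmetric: automation handles clear approvals, while adverse outcomes touched by context the model cannot represent are reserved for human judgment.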

3.3 Deliver: Aligning Outcomes with Real-World Impact and Governance

The Deliver stage focuses on how AI systems function in real-world settings, emphasizing not just deployment but ongoing monitoring, evaluation, and accountability to ensure outputs align with intended outcomes in dynamic environments. At scale, it requires continuous calibration between system behavior and human expectations, such as assessing supply chain models for resilience beyond efficiency or evaluating content moderation systems for fairness and contextual sensitivity. By incorporating feedback loops and governance mechanisms, this stage ensures that value is responsibly created and sustained over time, reinforcing alignment across definition, design, and real-world impact so AI systems remain trustworthy, adaptive, and effective.
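A feedback loop in the Deliver stage needs concrete signals to watch. The sketch below shows one possible monitoring check for a deployed screening system: it computes selection rates per group from logged decisions and flags groups whose rate falls well below the best-performing group's. The record format and function names are assumptions; the default ratio of 0.8 echoes the "four-fifths" rule of thumb used in U.S. employment-selection guidance, and other domains would set their own tolerance and metrics.

```python
from __future__ import annotations

from collections import defaultdict
from collections.abc import Iterable


def selection_rates(decisions: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Selection rate per group from (group, was_selected) records."""
    totals: dict[str, int] = defaultdict(int)
    selected: dict[str, int] = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {g: selected[g] / totals[g] for g in totals}


def flag_disparities(decisions: Iterable[tuple[str, bool]], min_ratio: float = 0.8) -> list[str]:
    """Groups whose selection rate is below min_ratio times the highest group's rate."""
    rates = selection_rates(decisions)
    if not rates:
        return []
    best = max(rates.values())
    if best == 0:
        return []
    return sorted(g for g, r in rates.items() if r / best < min_ratio)
```

A flagged group is a prompt for review, not a verdict: it sends the question back to the Define and Design stages, where the cause (a missing variable, a proxy feature, a shifted applicant pool) can be diagnosed.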

Figure 2 illustrates how ontology–design alignment mechanisms translate philosophical understanding into computational systems through three stages (Define, Design, and Deliver), enabling practical problem solving and value creation in AI applications. In short, the 3D framework shows how meaning becomes model and model becomes action, through structured translation, disciplined design, and responsible delivery.

ILLUSTRATIVE EXAMPLES: APPLYING THE 3D FRAMEWORK IN PRACTICE

To demonstrate the practical value of the 3D (Define–Design–Deliver) framework, this section presents illustrative examples across key domains where AI systems operate at scale. While the previous section outlined how philosophical ontology is translated into computational ontology through structured mechanisms, the focus here is on how these mechanisms function in real-world contexts. Each example follows the same three-stage logic — Define, Design, and Deliver — highlighting how alignment (or misalignment) shapes outcomes.

Rather than providing exhaustive case studies, these illustrations are designed to show recurring patterns across domains. They reveal that the effectiveness of AI systems depends not only on technical sophistication but on how well meaning is defined, translated into models, and aligned with real-world outcomes. Across contexts, the absence of alignment often leads to efficient yet incomplete decisions, while strong alignment enables systems that are both scalable and contextually grounded.

4.1 Hiring and Talent Selection: Beyond Résumé-Based Modeling

In hiring systems, the Define stage determines whether candidates are viewed narrowly as static profiles of credentials or more broadly as dynamic individuals with potential, adaptability, and growth trajectories. A limited definition focuses on measurable attributes like education and past performance, while a broader perspective captures contextual development and future capability, shaping what is considered valuable before modeling begins.

The Design stage encodes these assumptions into algorithmic systems; traditional models rely on keyword matching and historical data, which often reinforces existing biases. In the Deliver stage, automated screening can exclude high-potential candidates who fall outside predefined patterns. When these systems are complemented with contextual data and human judgment, however, they can support more balanced decisions that enhance both performance and diversity.

4.2 Healthcare Decision Systems: From Data Accuracy to Patient-Centered Care

In healthcare, the Define stage determines whether patients are viewed narrowly as clinical data points or more holistically as individuals with lived experiences, values, and contextual factors. A purely computational definition emphasizes symptoms, biomarkers, and treatment outcomes, while a broader perspective incorporates quality of life and personal circumstances, shaping how diagnoses and care decisions are framed.

The Design stage translates these definitions into diagnostic algorithms and predictive models that improve accuracy but may miss critical patient-specific nuances when context is underrepresented. In the Deliver stage, real-world implementation shows why alignment is essential: systems must produce recommendations that are not only accurate but meaningful. Systems that integrate clinical data with patient input and physician judgment are better positioned to support human-centered care, improving outcomes and trust.

4.3 Supply Chain Management: From Optimization to Resilience

In supply chain systems, the Define stage shapes whether the system is treated narrowly as a network of flows and costs or more broadly as a socio-technical system embedded in economic and geopolitical realities. A narrow definition emphasizes efficiency and cost minimization, while a broader perspective incorporates resilience, adaptability, and stakeholder relationships, fundamentally influencing how success is measured.

The Design stage translates these assumptions into optimization models and analytics tools, which perform well under stable conditions but can fail when uncertainty, interdependencies, and disruptions are underestimated. The Deliver stage then exposes these limitations during real-world shocks: efficiency-driven systems often break down, whereas those incorporating scenario planning, redundancy, and adaptive rules tend to prove more resilient. This highlights the need to align computational models with real-world complexity.
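The difference between a narrow and a broader Define stage can be shown with a toy supplier choice. The sketch compares two objectives over the same data: minimizing unit cost alone, and minimizing an expected cost that adds disruption probability times disruption cost. The expected-cost rule is a deliberately simple stand-in for the scenario planning mentioned above, and the `Supplier` fields and all numbers are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Supplier:
    name: str
    unit_cost: float
    disruption_prob: float  # assumed probability of disruption over the planning horizon
    disruption_cost: float  # assumed extra cost per unit if a disruption occurs


def expected_cost(s: Supplier) -> float:
    """Unit cost plus the probability-weighted cost of a disruption."""
    return s.unit_cost + s.disruption_prob * s.disruption_cost


def cheapest(suppliers: list[Supplier]) -> Supplier:
    """Narrow definition of success: minimize unit cost only."""
    return min(suppliers, key=lambda s: s.unit_cost)


def risk_adjusted(suppliers: list[Supplier]) -> Supplier:
    """Broader definition of success: minimize expected cost, including disruption exposure."""
    return min(suppliers, key=expected_cost)
```

With a hypothetical supplier A (unit cost 10.0, disruption probability 0.30, disruption cost 20.0) and supplier B (12.0, 0.05, 20.0), `cheapest` selects A while `risk_adjusted` selects B, whose expected cost is 13.0 against A's 16.0. The optimizer is identical in both cases; only the definition of what matters changed.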

AI succeeds not by what it computes, but by how well it captures what truly matters.

CONCLUSION

Ontology–design alignment frames AI development as the integration of meaning and computation, where philosophical ontology defines what matters and computational ontology determines how it is operationalized at scale. Using structured approaches like the 3D (Define–Design–Deliver) framework, organizations can systematically translate human values and contextual realities into adaptive, scalable system architectures. This alignment mitigates bias and ethical blind spots while strengthening governance, accountability, and trust, emphasizing that AI success depends not just on efficiency but on how well it reflects meaningful aspects of human reality. In this sense, the future of AI is not merely a technical challenge, but an alignment challenge — ensuring that what systems optimize truly reflects what societies value.

References

Bostrom, N. (2014). Superintelligence: Paths, dangers, strategies. Oxford University Press.

Brynjolfsson, E., & McAfee, A. (2017). Machine, platform, crowd: Harnessing our digital future. W. W. Norton & Company.

Davenport, T. H., & Ronanki, R. (2018). Artificial intelligence for the real world. Harvard Business Review, 96(1), 108–116.

Floridi, L., Cowls, J., Beltrametti, M., Chatila, R., Chazerand, P., Dignum, V., Luetge, C., Madelin, R., Pagallo, U., Rossi, F., Schafer, B., Valcke, P., & Vayena, E. (2018). AI4People — An ethical framework for a good AI society: Opportunities, risks, principles, and recommendations. Minds and Machines, 28(4), 689–707.

Mittelstadt, B. D., Allo, P., Taddeo, M., Wachter, S., & Floridi, L. (2016). The ethics of algorithms: Mapping the debate. Big Data & Society, 3(2), 1–21.

Russell, S. (2019). Human compatible: Artificial intelligence and the problem of control. Viking.

Varian, H. R. (2019). Artificial intelligence, economics, and industrial organization. In A. Agrawal, J. Gans, & A. Goldfarb (Eds.), The economics of artificial intelligence: An agenda (pp. 399–422). University of Chicago Press.


About the Author

Distinguished Professor, Dr. Paul Hong — University of Toledo

Paul C. Hong is a Distinguished University Professor and Chair of Information Systems and Supply Chain Management at the University of Toledo. His work focuses on leadership, governance, and decision-making in the AI era, integrating strategy, technology, and institutional trust. He has published extensively in leading academic journals and writes on how individuals and organizations navigate complexity, disruption, and global transformation.

© K-Global Scholars and Professionals Forum. All rights reserved. Content published in the K-GSP Forum may not be reproduced, distributed, or transmitted in any form without prior written permission from the K-GSP Forum, except for brief quotations with full attribution.