Who Will Govern AI? Seoul’s Bid for a UN Hub in a Fragmenting World

Paul C. Hong · Distinguished University Professor, University of Toledo

EXECUTIVE SUMMARY

As artificial intelligence reshapes economic power, security, and societal trust, the global contest is shifting from technological dominance to governance leadership amid a rapidly fragmenting landscape. The absence of shared standards across the United States, China, and Europe is emerging as a systemic risk¹, highlighting the need for coordinated global frameworks. In this context, Seoul’s bid to host a United Nations-affiliated AI Hub signals a transition from capability-building to rule-shaping — emphasizing ethical alignment, risk management, and a distributed architecture of governance that will ultimately determine the sustainability of AI across borders.

AI governance is no longer a technical question — it is a geopolitical contest.

1 Introduction

Artificial intelligence has entered a decisive phase, shifting from a race for computational scale to a contest over governance. As AI permeates finance, healthcare, defense, and public administration², its influence extends beyond efficiency into the structure of society. The central challenge is no longer how to build intelligent systems, but how to align them with shared norms, manage cross-border risks, and sustain trust.

This shift reflects a deeper transformation in the nature of technological power. Unlike earlier waves of digital innovation that were largely absorbed within market mechanisms and national regulatory frameworks, AI operates as a general-purpose system³ integrating data flows, decision-making processes, and institutional behavior across sectors and borders. Its dual capacity to generate opportunity and amplify risk elevates governance from a secondary concern to a primary determinant of long-term stability, particularly as AI becomes embedded in critical infrastructures.

The global landscape of AI governance is increasingly fragmented. The United States, China, and the European Union are advancing distinct models⁴ shaped by differing priorities around innovation, control, privacy, and ethics, resulting in parallel ecosystems with incompatible standards and regulatory assumptions. This divergence produces a patchwork system in which interoperability weakens, compliance becomes more complex, and systemic vulnerabilities increase, reflecting broader geopolitical tensions rather than movement toward a unified framework.

At the same time, this fragmentation unfolds alongside deep technological interdependence. AI supply chains, spanning semiconductors, cloud infrastructure, data ecosystems, and software platforms, remain globally interconnected, creating a structural tension between national autonomy and global reliance. Historically, such conditions have required institutional coordination, as seen in the postwar order anchored by the United Nations and hubs such as Geneva. AI now presents a comparable challenge and calls for new mechanisms of alignment. Seoul’s bid to host a UN AI Hub signals an emerging shift toward coordination-driven global governance.

2 Fragmentation and Divergence in Global AI Governance

Artificial intelligence is unfolding across a fragmented landscape of competing governance systems as nations embed institutional priorities into regulatory design. This shift has transformed AI governance into a domain of geopolitical differentiation, producing systemic divergence rather than mere variation⁵. These competing governance logics are creating distinct ecosystems with limited interoperability and rising regulatory friction.

2.1 Diverging Models of AI Governance

While prior frameworks have categorized global AI governance into competing national models⁶, this analysis extends the discussion by focusing on the coordination gap that emerges between these systems and the institutional mechanisms required to bridge them.

The global AI system remains deeply interconnected, as cross-border data flows, multinational technology firms, and globally distributed supply chains continue to bind otherwise divergent governance systems. Firms must navigate multiple regulatory regimes simultaneously, governments must manage cross-border risks, and institutions face growing challenges in coordinating standards across incompatible frameworks.

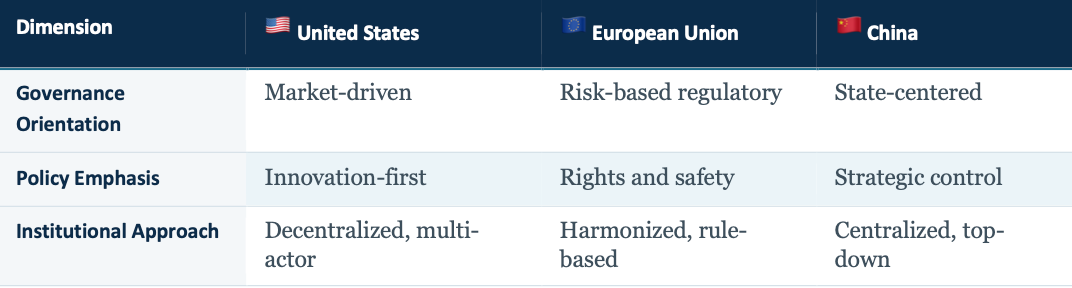

Table 1. Comparative AI Governance Models Across Major Powers

The United States relies on a decentralized, market-driven ecosystem that prioritizes innovation and experimentation, often at the cost of regulatory coherence. China adopts a state-centered model that aligns AI development with national strategic objectives, enabling coordinated deployment but raising concerns about transparency. The European Union emphasizes a comprehensive regulatory framework grounded in risk assessment and fundamental rights — providing normative clarity while potentially constraining speed.

Together, these models represent competing visions of how intelligence should be governed. As they solidify, they generate parallel systems with limited alignment, reinforcing divergence as the defining feature of the global AI landscape.

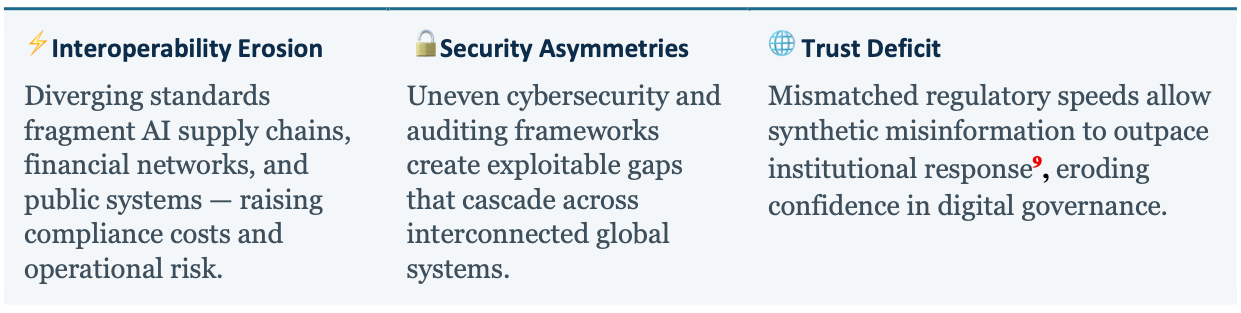

2.2 Systemic Risks of Fragmentation

The fragmentation of AI governance introduces systemic risks that accumulate across interconnected systems⁷. Fragmentation also deepens global inequality in AI capability and governance capacity⁸. Advanced economies are better positioned to develop, deploy, and regulate AI systems, while many developing countries face significant constraints. Without coordinated international efforts, the benefits of AI will remain unevenly distributed, exacerbating existing global divides.

⚡ Interoperability Erosion — Diverging standards fragment AI supply chains, financial networks, and public systems — raising compliance costs and operational risk.

🔓 Security Asymmetries — Uneven cybersecurity and auditing frameworks create exploitable gaps that cascade across interconnected global systems.

🌐 Trust Deficit — Mismatched regulatory speeds allow synthetic misinformation to outpace institutional response⁹, eroding confidence in digital governance.

“Fragmentation is not merely a regulatory condition — it becomes an operational constraint that reshapes how global systems function.”

3 Institutional Coordination for Global AI Governance

The accelerating divergence of AI systems has created a widening governance gap, as national strategies evolve without cross-border alignment. At the same time, deep interdependence — driven by global data flows and supply chains — renders purely national solutions insufficient. In this context, the United Nations is uniquely positioned to enable coordination¹⁰ through a UN AI Hub that translates shared principles into operational frameworks.

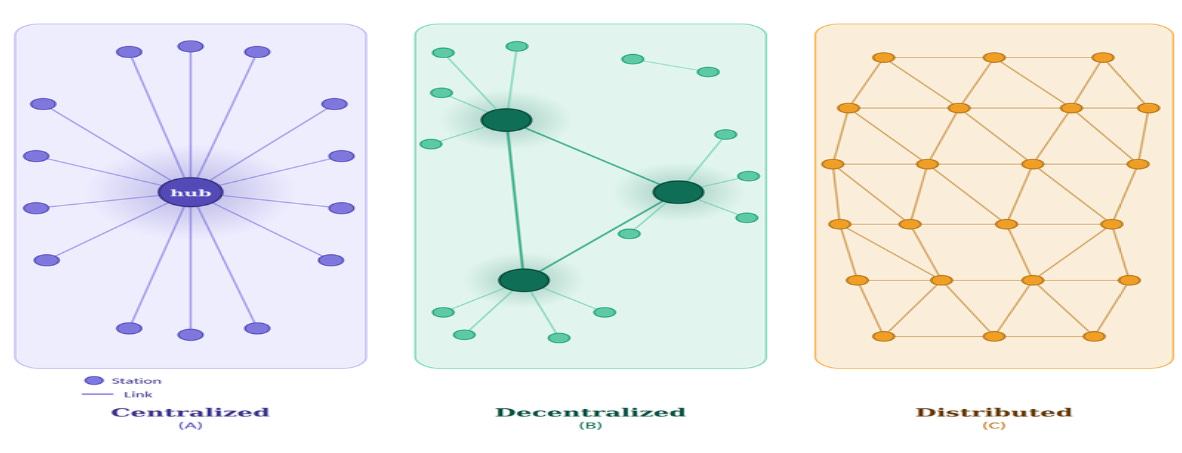

Figure 1. Network Topologies: Centralized · Decentralized · Distributed. Adapted from Paul Baran, On Distributed Communications (RAND Corporation, 1964).

(A) Centralized — All nodes connect to a single hub. Every message routes through the center. Highly efficient and simple to manage — but a single point of failure. Destroy the hub, and the entire network collapses.

(B) Decentralized — Multiple sub-hubs each manage a cluster of nodes, with hubs linked to one another. More resilient than centralized — failure of one hub isolates its cluster but not the whole network.

(C) Distributed — Every node connects to multiple peers with no hierarchy. Messages can route around damage. Maximum resilience — the basis of Paul Baran’s 1964 design for a nuclear-survivable communications network.
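The resilience contrast among the three topologies can be sketched in a few lines of Python. This is a toy illustration, not Baran’s model: the node counts, the specific links, and the helper names (`make_graph`, `largest_component`) are assumptions chosen for the example. The sketch removes one hub (or one peer) from each network and counts how many surviving nodes can still reach one another.

```python
from collections import deque


def make_graph(edges):
    """Build an undirected adjacency map from a list of (a, b) links."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj


def largest_component(adj, removed):
    """Size of the largest group of surviving nodes that can still reach each other."""
    alive = set(adj) - set(removed)
    seen, best = set(), 0
    for start in alive:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:  # breadth-first search over surviving nodes only
            node = queue.popleft()
            size += 1
            for nxt in adj[node]:
                if nxt in alive and nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        best = max(best, size)
    return best


# (A) Centralized: node 0 is the single hub for six other nodes.
centralized = make_graph([(0, i) for i in range(1, 7)])

# (B) Decentralized: hubs 0, 1, 2 are linked to one another; each serves two nodes.
decentralized = make_graph(
    [(0, 1), (1, 2), (0, 2), (0, 3), (0, 4), (1, 5), (1, 6), (2, 7), (2, 8)]
)

# (C) Distributed: seven peers, each linked to its two nearest neighbors on both sides.
distributed = make_graph(
    [(i, (i + 1) % 7) for i in range(7)] + [(i, (i + 2) % 7) for i in range(7)]
)

# Remove node 0 from each network and compare what remains connected.
for name, graph in [
    ("centralized", centralized),
    ("decentralized", decentralized),
    ("distributed", distributed),
]:
    survivors = len(graph) - 1
    connected = largest_component(graph, {0})
    print(f"{name}: {connected} of {survivors} surviving nodes still connected")
```

In this toy setup, losing the centralized hub leaves every remaining node isolated, losing one decentralized hub cuts off only its own cluster, and the distributed network keeps all survivors connected.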

As Figure 1 suggests, effective AI governance depends on a distributed architecture in which national systems remain autonomous and linked to one another, with a coordinating hub that facilitates alignment across risk, inclusion, and trust rather than acting as a single point of control.

A central challenge for any UN-centered AI governance initiative is the participation of major powers, whose strategic competition increasingly shapes the global AI landscape. Divergent regulatory philosophies and geopolitical tensions may limit willingness to fully align under a shared framework. However, a distributed governance model mitigates this constraint by allowing partial alignment rather than full convergence, enabling major powers to retain autonomy while still participating in targeted coordination on systemic risks, interoperability standards, and trust mechanisms.

3.1 The Role of the United Nations

The United Nations provides a foundational platform for AI governance through its institutional legitimacy and convening power. Unlike national governments or regional bodies, the UN operates as a global forum where diverse actors can engage in dialogue and negotiation. This inclusiveness is essential: governance challenges extend beyond technical considerations to encompass ethical, social, and political dimensions.

One of the UN’s primary strengths lies in its ability to establish normative frameworks. Through declarations, guidelines, and multilateral agreements, it can articulate principles — from transparency and accountability to fairness and human rights — that provide a common reference point across diverse governance systems. While not always legally binding, they shape expectations and influence policy across jurisdictions.

The UN’s role in AI governance is not to enforce uniformity but to enable alignment. It operates as a facilitator rather than a regulator, creating the conditions for cooperation among actors with differing priorities and capabilities.

3.2 Coordinating Risk, Inclusion, and Trust

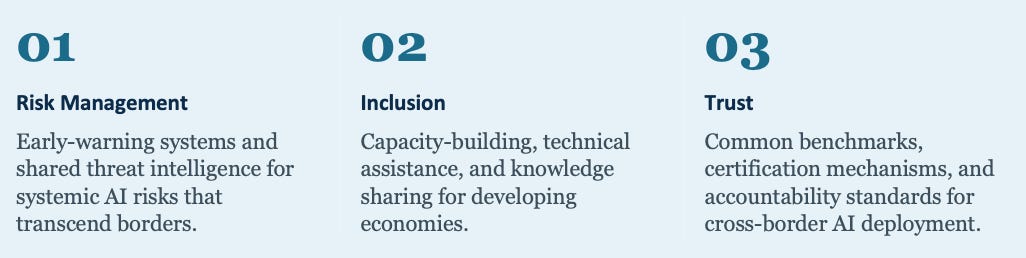

A UN AI Hub would operationalize global coordination across three interrelated dimensions:

01 Risk Management — Early-warning systems and shared threat intelligence for systemic AI risks that transcend borders.

02 Inclusion — Capacity-building, technical assistance, and knowledge sharing for developing economies.

03 Trust — Common benchmarks, certification mechanisms, and accountability standards for cross-border AI deployment.

Together, these functions define a governance model based on alignment rather than control. The UN AI Hub would not replace existing institutions or impose a centralized framework — it would connect diverse systems within a coordinated architecture, addressing risk, inclusion, and trust in an integrated manner.

4 Seoul’s Strategic Positioning in Global AI Governance

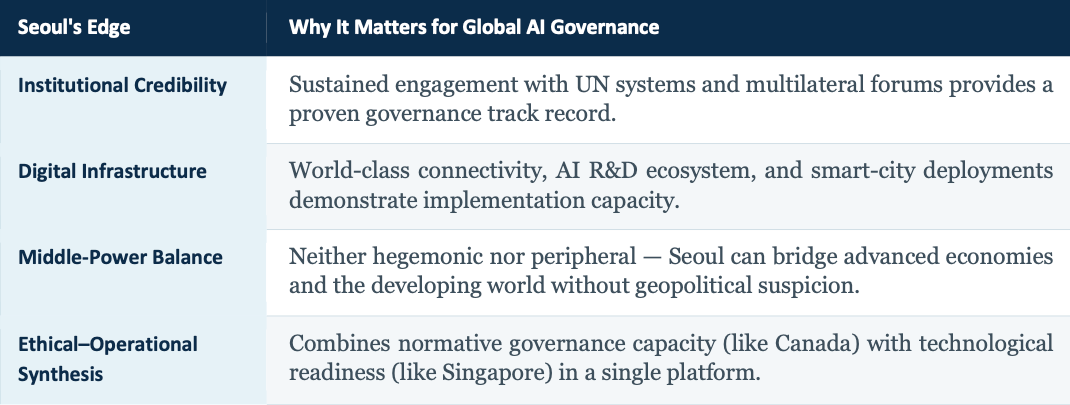

South Korea’s proposal reflects a shift from technological capability toward governance leadership, positioning Seoul as a coordination node across fragmented systems. By combining digital infrastructure with institutional capacity, it offers a platform where governance principles can be operationalized. In a fragmented yet interdependent world, this signals a move from dominance toward alignment and connectivity. Seoul’s bid is not about the prestige of hosting; it is about occupying a distinctive structural position in the global AI architecture.

4.1 Institutional Foundations and Global Engagement

South Korea has demonstrated sustained engagement with the United Nations system¹¹ and related international institutions, reflecting both commitment and readiness to assume a larger governance role. Its experience hosting global forums, participating in multilateral initiatives, and implementing national AI strategies provides a strong institutional base for operationalizing coordination at scale.

Compared to traditional host models, Seoul’s institutional foundation offers a complementary balance. Switzerland provides longstanding diplomatic credibility; Singapore offers operational efficiency; Canada contributes normative leadership in ethics and research. Seoul’s advantage lies in synthesizing these dimensions into a cohesive platform — institutional legitimacy with implementation capacity.

Seoul’s bid positions South Korea as a middle-power coordination bridge in global AI governance.

This institutional readiness is reinforced by South Korea’s strong performance in global governance benchmarks. Korea consistently ranks among the top countries in the United Nations E-Government Development Index and OECD digital government assessments, reflecting advanced administrative capacity, digital integration, and public-sector innovation. These indicators provide measurable evidence that Seoul is not only conceptually positioned but also operationally capable of supporting a global AI governance hub.

4.2 Seoul as a Middle-Power Coordination Bridge

As a middle power, South Korea occupies a distinctive position within the global system, enabling it to engage across geopolitical divides without being perceived as hegemonic. It maintains strong economic and technological ties with major powers while preserving the flexibility to connect with emerging economies.

Seoul’s differentiation lies not in surpassing existing hubs, but in connecting them within a distributed architecture — reinforcing its potential as a focal node in a future-oriented global governance system.

Seoul’s Edge in Global AI Governance

Compared to other middle-power candidates, Seoul offers a more integrated governance proposition. Canada provides strong normative leadership in AI ethics but lacks the same level of digital infrastructure integration; the Netherlands offers institutional credibility within the European system but is regionally anchored; and the UAE demonstrates rapid technological deployment but faces limitations in global governance legitimacy. Seoul’s advantage lies in combining institutional credibility, technological capacity, and geopolitical balance within a single platform, allowing it to function not only as a host location but as an active coordination node in the global AI governance system.

5 Conclusion

The emergence of artificial intelligence as a foundational system has shifted the global challenge from innovation to governance. Deepening fragmentation across major powers increases systemic risk while constraining the potential for shared progress — making alignment, interoperability, and institutional cooperation essential to sustaining global stability.

The United Nations provides a critical platform for this transition, yet its effectiveness will depend on the ability to operationalize coordination through credible and capable nodes. Among these, Seoul stands out by combining technological capability, institutional readiness, and geopolitical balance.

In this context, the future of AI will be shaped less by where innovation originates and more by where coordination can be sustained — because in a world of intelligent systems, enduring advantage belongs to those who can align power with trust. The emergence of distributed governance hubs will define this transition, linking national systems into a resilient global architecture where coordination, not dominance, determines long-term stability.

“AI will not be governed by power alone, but by the systems of trust we choose to build.”

ABOUT THE AUTHOR

Paul C. Hong · Distinguished University Professor, University of Toledo

Paul C. Hong is a Distinguished University Professor and Chair of Information Systems and Supply Chain Management at the University of Toledo. His work focuses on leadership, governance, and decision-making in the AI era, integrating strategy, technology, and institutional trust. He has published extensively in leading academic journals and writes on how individuals and organizations navigate complexity, disruption, and global transformation.

Notes

¹ OECD. (2024). OECD AI policy observatory: Governing AI in a global context; World Economic Forum. (2024). The global risks report 2024.

² McKinsey Global Institute. (2023). The economic potential of generative AI: The next productivity frontier.

³ Agrawal, A., Gans, J., & Goldfarb, A. (2022). Power and prediction: The disruptive economics of artificial intelligence; Brynjolfsson, E., & McAfee, A. (2014). The second machine age.

⁴ European Commission. (2024). Artificial Intelligence Act (EU 2024/1689); White House. (2023). Executive Order on AI (E.O. 14110); State Council of China. (2023). Measures for generative AI services.

⁵ Baldwin, R. (2016). The great convergence; International Monetary Fund. (2023). Geoeconomic fragmentation and the future of multilateralism.

⁶ Roberts, H., Hine, E., Taddeo, M., & Floridi, L. (2024). Global AI governance: Barriers and pathways forward. International Affairs, 100(3), 1275–1286.

⁷ Bank for International Settlements. (2023). Annual economic report 2023; World Bank. (2023). Digital progress and trends report.

⁸ UNDP. (2024). Human development report: Technology and inequality.

⁹ Pew Research Center. (2024). Public engagement with AI and digital platforms; World Economic Forum. (2024). The global risks report 2024.

¹⁰ United Nations. (2023). Governing AI for humanity: Interim report; United Nations. (2024). Governing AI for humanity: Final report.

¹¹ Government of the Republic of Korea. (2023). National strategy for artificial intelligence; OECD. (2023). Going digital in Korea.


© K-Global Scholars and Professionals Forum. All rights reserved. Content published in the K-GSP Forum may not be reproduced, distributed, or transmitted in any form without prior written permission from the K-GSP Forum, except for brief quotations with full attribution.